ChatGPT’s means to disregard copyright and customary sense whereas creating photos and deepfakes is the speak of the city proper now. The picture generator mannequin that OpenAI launched final week is so broadly used that it’s ruining ChatGPT’s fundamental performance and uptime for everybody.

Nevertheless it’s not simply developments in AI-generated photos that we’ve witnessed just lately. The Runway Gen-4 video mannequin permits you to create unbelievable clips from a single textual content immediate and a photograph, sustaining character and scene continuity, in contrast to something we have now seen earlier than.

The movies the corporate supplied ought to put Hollywood on discover. Anybody could make movie-grade clips with instruments like Ruway’s, assuming they work as supposed. On the very least, AI will help cut back the price of particular results for sure films.

It’s not simply Runway’s new AI video device that’s turning heads. Meta has a MoCha AI product of its personal that can be utilized to create speaking AI characters in movies that is likely to be adequate to idiot you.

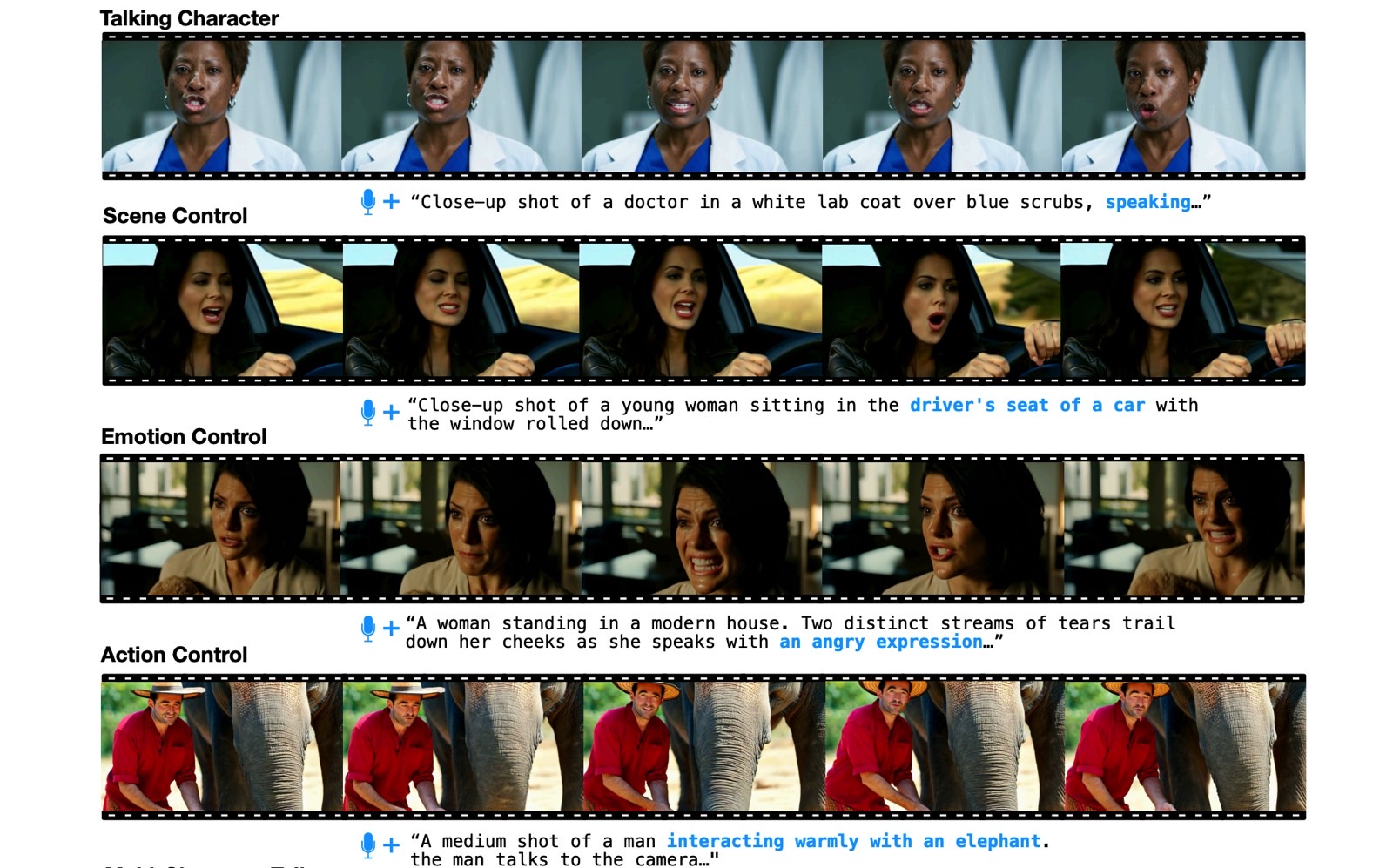

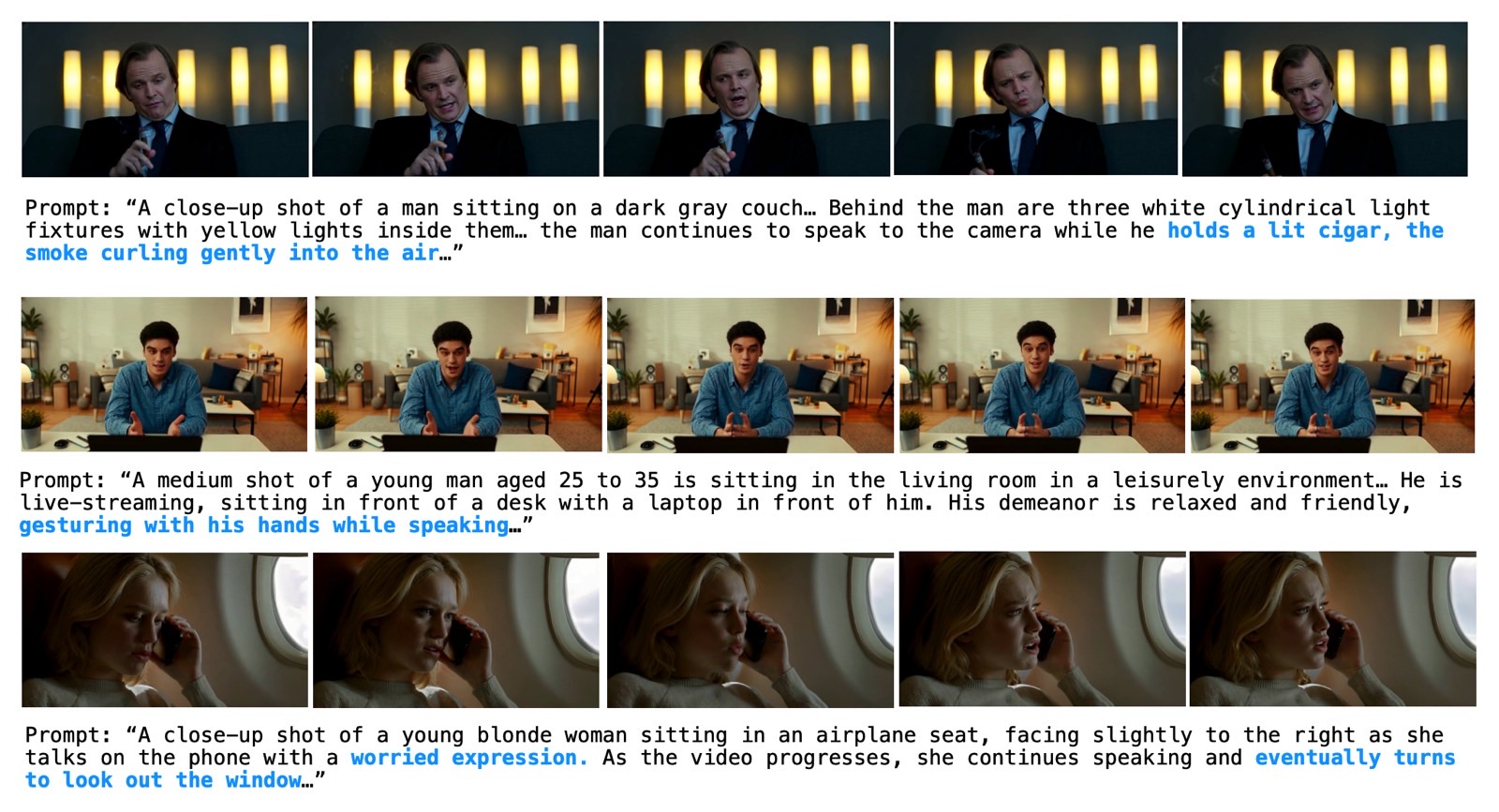

MoCha isn’t a sort of espresso spelled unsuitable. It’s quick for Film Character Animator, a analysis challenge from Meta and the College of Waterloo. The fundamental concept of the MoCha AI mannequin is fairly easy. You present the AI with a textual content immediate that describes the video and a speech pattern. The AI then places collectively a video that ensures the characters “communicate” the strains within the audio pattern nearly completely.

The researchers supplied loads of samples that present MoCha’s superior capabilities, and the outcomes are spectacular. Now we have all types of clips displaying live-action and animated protagonists talking the strains from the audio pattern. Mocha takes into consideration feelings, and the AI may assist a number of characters in the identical scene.

The outcomes are nearly excellent, however not fairly. There are some seen imperfections within the clips. The attention and face actions are giveaways that we’re taking a look at AI-generated video. Additionally, whereas the lip motion seems to be completely synchronized to the audio pattern, the motion of the complete mouth is exaggerated in comparison with actual folks.

I say that as somebody who has seen loads of comparable AI modes from different firms by now, together with some extremely convincing ones.

First, there’s the Runway Gen-4 that we talked about just a few days in the past. The Gen-4 demo clips are higher than MoCha. However that’s a product you need to use, MoCha can definitely be improved by the point it turns into a business AI mannequin.

Talking of AI fashions you possibly can’t use, I all the time evaluate new merchandise that may sync AI-generated characters to audio samples to Microsoft’s VASA-1 AI analysis challenge, which we noticed final April.

VASA-1 permits you to flip static photographs of actual folks into movies of talking characters so long as you present an audio pattern of any sort. Understandably, Microsoft by no means made the VASA-1 mannequin out there to shoppers, as such tech opens the door to abuse.

Lastly, there’s TikTok’s mother or father firm, ByteDance, which confirmed a VASA-1-like AI a few months in the past that does the identical factor. It turns a single picture into a completely animated video.

OmniHuman-1 additionally animates physique half actions, one thing I noticed in Meta’s MoCha demo as nicely. That’s how we bought to see Taylor Swift sing the Naruto theme tune in Japanese. Sure, it’s a deepfake clip; I’m attending to that.

Merchandise like VASA-1, OmniHuman-1, MoCha, and doubtless Runway Gen-4 is likely to be used to create deepfakes that may mislead.

Meta researchers engaged on MoCha and comparable initiatives ought to tackle this publicly if and when the mannequin turns into out there commercially.

You would possibly spot inconsistencies within the MoCha samples out there on-line, however watch these movies on a smartphone show, and they won’t be so evident. Take away your familiarity with AI video era; you would possibly suppose a few of these MoCha clips have been shot with actual cameras.

Additionally vital could be the disclosure of the info Meta used to coach this AI. The paper stated MoCha employed some 500,000 samples, amounting to 300 hours of high-quality speech video samples, with out saying the place they bought that knowledge. Sadly, that’s a theme within the trade, not acknowledging the supply of the info used to coach the AI, and it’s nonetheless a regarding one.

You’ll discover the total MoCha analysis paper at this hyperlink.