Today, we’re announcing Claude Opus 4.7 in Amazon Bedrock, Anthropic’s most intelligent Opus model for advancing performance across coding, long-running agents, and professional work.

Claude Opus 4.7 is powered by Amazon Bedrock’s next generation inference engine, delivering enterprise-grade infrastructure for production workloads. Bedrock’s new inference engine has brand-new scheduling and scaling logic that dynamically allocates capacity to requests, improving availability particularly for steady-state workloads while making room for rapidly scaling services. It provides zero operator access, meaning customer prompts and responses are never visible to Anthropic or AWS operators, which keeps sensitive data private.

According to Anthropic, the Claude Opus 4.7 model provides improvements across the workflows that teams run in production, such as agentic coding, knowledge work, visual understanding, and long-running tasks. Opus 4.7 works better through ambiguity, is more thorough in its problem solving, and follows instructions more precisely.

- Agentic coding: The model extends Opus 4.6’s lead in agentic coding, with stronger performance on long-horizon autonomy, systems engineering, and complex code reasoning tasks. According to Anthropic, the model records high-performance scores with 64.3% on SWE-bench Pro, 87.6% on SWE-bench Verified, and 69.4% on Terminal-Bench 2.0.

- Knowledge work: The model advances professional knowledge work, with stronger performance on document creation, financial analysis, and multi-step research workflows. The model reasons through underspecified requests, making sensible assumptions and stating them clearly, and self-verifies its output to improve quality on the first step. According to Anthropic, the model reaches 64.4% on Finance Agent v1.1.

- Long-running tasks: The model stays on track over longer horizons, with stronger performance over its full 1M token context window as it reasons through ambiguity and self-verifies its output.

- Vision: The model adds high-resolution image support, improving accuracy on charts, dense documents, and screen UIs where fine detail matters.

The model is an upgrade from Opus 4.6 but may require prompting changes and harness tweaks to get the most out of it. To learn more, visit Anthropic’s prompting guide.

Claude Opus 4.7 model in action

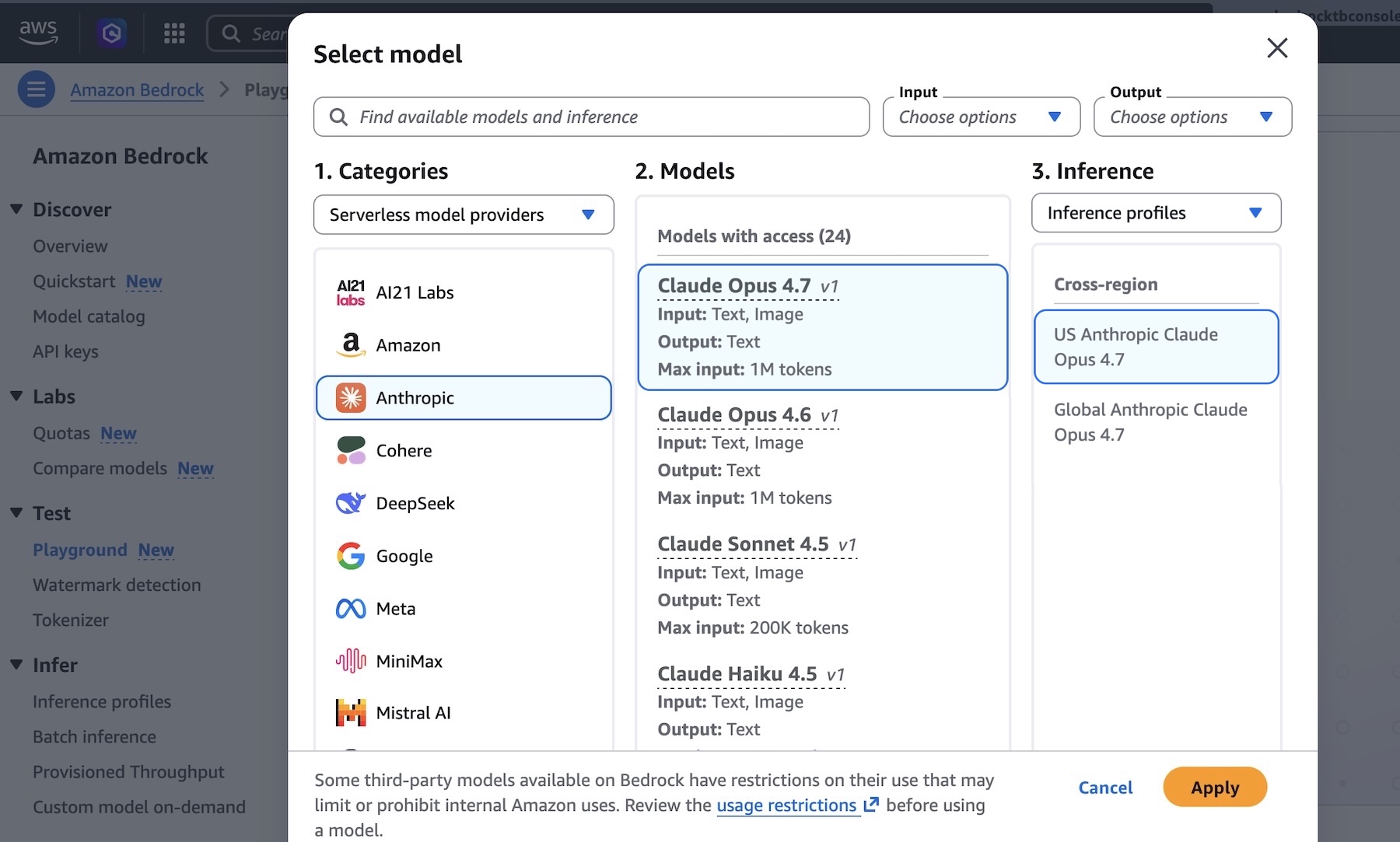

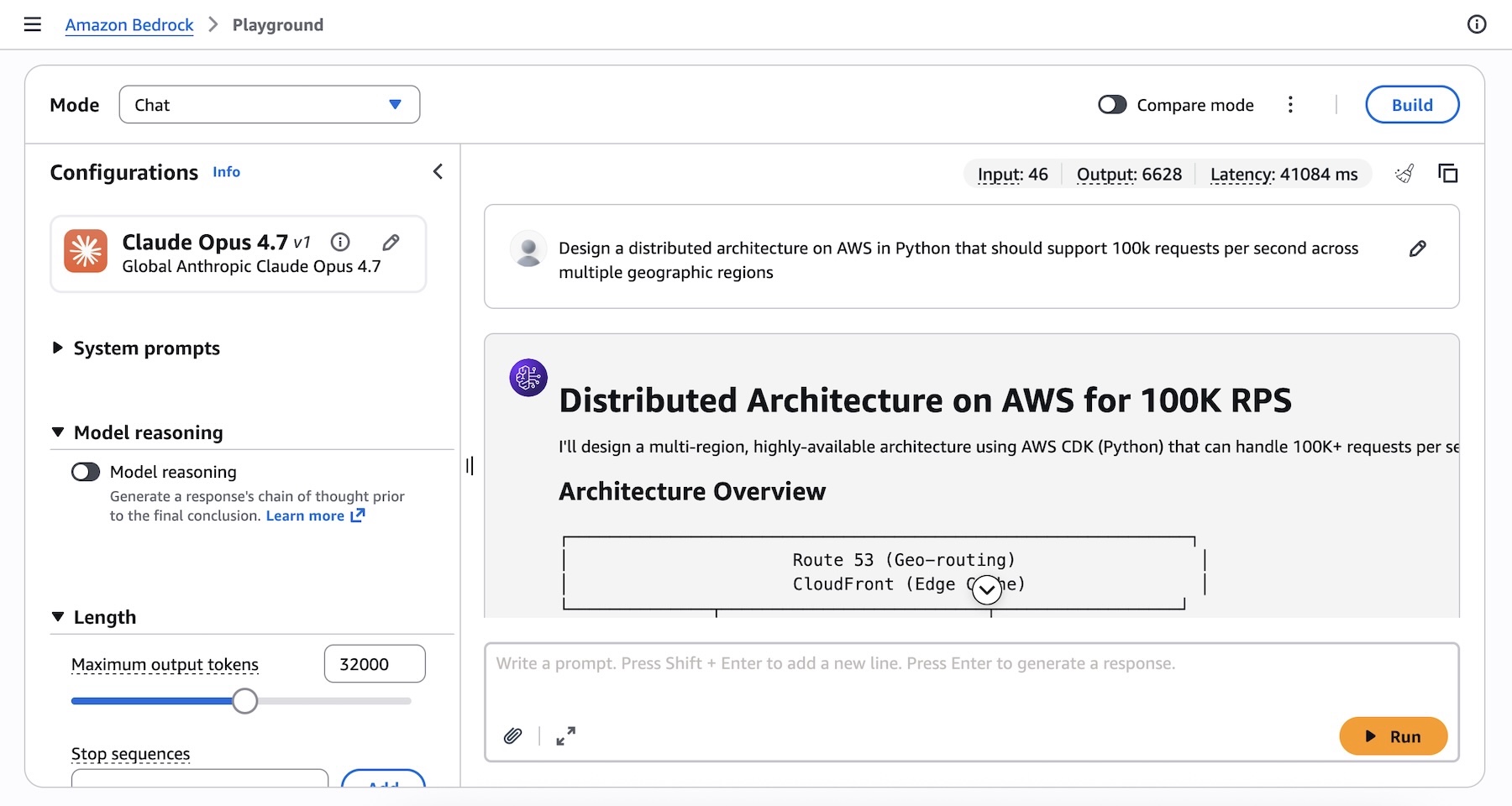

You can get started with the Claude Opus 4.7 model in the Amazon Bedrock console. Choose Playground under the Test menu and choose Claude Opus 4.7 when you select a model. Now, you can test your complex coding prompt with the model.

I ran the following example prompt about a technical architecture decision:

Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions.

You can also access the model programmatically by using the Anthropic Messages API to call the bedrock-runtime or bedrock-mantle endpoints through the Anthropic SDK, or keep using the Invoke and Converse APIs on bedrock-runtime through the AWS Command Line Interface (AWS CLI) and AWS SDK.

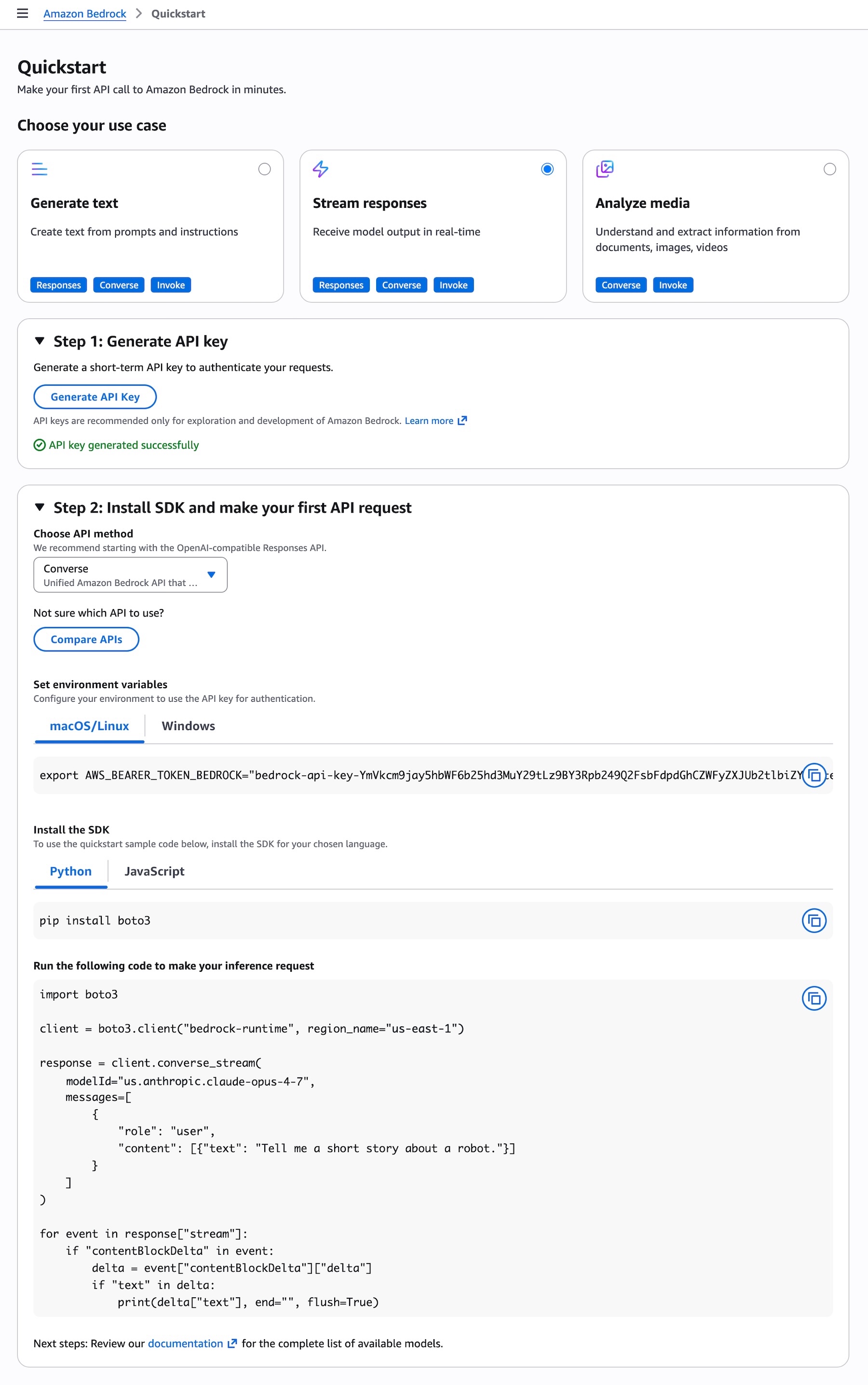

To get started with making your first API call to Amazon Bedrock in minutes, choose Quickstart in the left navigation pane in the console. After choosing your use case, you can generate a short-term API key to authenticate your requests for testing purposes.
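Once you have a short-term key, you need to hand it to your code. Here is a minimal sketch of one way to do that, assuming the key is exported in the `AWS_BEARER_TOKEN_BEDROCK` environment variable, which is my understanding of how the AWS SDKs pick up Bedrock API keys; the console Quickstart shows the exact steps for your use case. The helper names `has_bedrock_api_key` and `make_runtime_client` are hypothetical.

```python
import os

# Assumption: the AWS SDKs read a Bedrock API key from this environment variable.
BEDROCK_API_KEY_ENV = "AWS_BEARER_TOKEN_BEDROCK"


def has_bedrock_api_key(env=None) -> bool:
    """Return True if a non-empty Bedrock API key is present in the environment."""
    env = os.environ if env is None else env
    return bool(env.get(BEDROCK_API_KEY_ENV, "").strip())


def make_runtime_client(region: str = "us-east-1"):
    """Create a bedrock-runtime client, failing early if no API key is exported."""
    if not has_bedrock_api_key():
        raise RuntimeError(
            f"Export {BEDROCK_API_KEY_ENV} with your short-term key before testing."
        )
    import boto3  # imported lazily so the check above works without the AWS SDK

    return boto3.client("bedrock-runtime", region_name=region)
```

Failing early with a clear message is only a convenience for testing. Short-term keys expire, so for production workloads you would normally rely on your usual AWS credentials instead.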

When you choose an API method such as the OpenAI-compatible Responses API, you get sample code to run your prompt and make an inference request using the model.

To invoke the model through the Anthropic Claude Messages API, you can proceed as follows using the anthropic[bedrock] SDK package for a streamlined experience:

from anthropic import AnthropicBedrockMantle

# Initialize the Bedrock Mantle client (uses SigV4 auth automatically)
mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

# Create a message using the Messages API
message = mantle_client.messages.create(
    model="us.anthropic.claude-opus-4-7",
    max_tokens=32000,
    messages=[
        {"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions"}
    ]
)

# Print the text of the first content block in the response
print(message.content[0].text)

You can also run the following command to invoke the model directly on the bedrock-runtime endpoint using the AWS CLI and the Invoke API:

aws bedrock-runtime invoke-model \
    --model-id us.anthropic.claude-opus-4-7 \
    --region us-east-1 \
    --body '{"anthropic_version":"bedrock-2023-05-31", "messages": [{"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."}], "max_tokens": 32000}' \
    --cli-binary-format raw-in-base64-out \
    invoke-model-output.txt

For more intelligent reasoning capability, you can use Adaptive thinking with Claude Opus 4.7, which lets Claude dynamically allocate thinking token budgets based on the complexity of each request.
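To make this concrete, here is a minimal sketch of an Invoke API request with Adaptive thinking turned on, using the AWS SDK for Python (Boto3). The `thinking` field with `{"type": "adaptive"}` is my assumption based on Anthropic's Messages API and is not shown elsewhere in this post, so check the current documentation before relying on it. The helper names are hypothetical.

```python
import json


def build_invoke_body(prompt: str, max_tokens: int = 32000,
                      adaptive_thinking: bool = True) -> str:
    """Build the JSON body for the Invoke API, optionally enabling adaptive thinking."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if adaptive_thinking:
        # Assumption: adaptive thinking is requested with this field shape.
        body["thinking"] = {"type": "adaptive"}
    return json.dumps(body)


def extract_text(response_body: dict) -> str:
    """Join the text blocks of a Messages-style response, skipping thinking blocks."""
    return "".join(
        block.get("text", "")
        for block in response_body.get("content", [])
        if block.get("type") == "text"
    )


def invoke_with_adaptive_thinking(prompt: str, region: str = "us-east-1") -> str:
    """Call the model through bedrock-runtime; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the helpers above work without the AWS SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId="us.anthropic.claude-opus-4-7",
        body=build_invoke_body(prompt),
    )
    return extract_text(json.loads(response["body"].read()))
```

When thinking is enabled, the response can contain thinking blocks ahead of the answer, so `extract_text` filters on the block type instead of reading only the first content block.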

To learn more, visit the Anthropic Claude Messages API and check out code examples for multiple use cases and a variety of programming languages.

Things to know

Let me share some important technical details that I think you’ll find useful.

- Choosing APIs: You can choose from a variety of Bedrock APIs for model inference, as well as the Anthropic Messages API. The Bedrock-native Converse API supports multi-turn conversations and Guardrails integration. The Invoke API provides direct model invocation and the lowest-level control.

- Scaling and capacity: Bedrock’s new inference engine is designed to rapidly provision and serve capacity across many different models. When accepting requests, we prioritize keeping steady-state workloads running, and ramp usage and capacity rapidly in response to changes in demand. During periods of high demand, requests are queued rather than rejected. Up to 10,000 requests per minute (RPM) per account per Region are available immediately, with more available upon request.
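If you go with the Converse API from the first point, a multi-turn conversation can be sketched as follows with the AWS SDK for Python (Boto3). This is an illustrative sketch, not an official sample: the helper names are hypothetical, the follow-up prompt is my own, and the default of 4,096 output tokens is an arbitrary choice.

```python
def user_turn(text: str) -> dict:
    """Wrap user text in the Converse API message shape."""
    return {"role": "user", "content": [{"text": text}]}


def assistant_turn(text: str) -> dict:
    """Wrap a previous model reply so it can be sent back as history."""
    return {"role": "assistant", "content": [{"text": text}]}


def build_converse_request(messages, max_tokens: int = 4096) -> dict:
    """Assemble keyword arguments for the bedrock-runtime converse call."""
    return {
        "modelId": "us.anthropic.claude-opus-4-7",
        "messages": list(messages),
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def reply_text(response: dict) -> str:
    """Join the text blocks of a Converse response, ignoring other block types."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def converse(messages, region: str = "us-east-1") -> str:
    """Send the conversation so far; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the helpers above work without the AWS SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    return reply_text(client.converse(**build_converse_request(messages)))


def two_turn_demo() -> str:
    """Ask a question, then a follow-up that depends on the first answer."""
    history = [user_turn("Design a distributed architecture on AWS in Python "
                         "that should support 100k requests per second.")]
    first_reply = converse(history)
    history += [assistant_turn(first_reply),
                user_turn("Which component is the likeliest bottleneck?")]
    return converse(history)
```

The Converse API is stateless, so each call resends the full message history. That is why `two_turn_demo` appends the first reply before asking the follow-up question.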

Now available

Anthropic’s Claude Opus 4.7 model is available today in the US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm) Regions; check the full list of Regions for future updates. To learn more, visit the Claude by Anthropic in Amazon Bedrock page and the Amazon Bedrock pricing page.

Give Anthropic’s Claude Opus 4.7 a try in the Amazon Bedrock console today and send feedback to AWS re:Post for Amazon Bedrock or through your usual AWS Support contacts.

— Channy

Updated on April 17, 2026 – We fixed code samples and CLI commands to align with the new version.