The AI infrastructure market isn’t transferring in a single path; it’s separating into two distinct worlds. On one facet are frontier coaching clusters, constructed to develop basis fashions at a large scale, tightly coupled to proprietary materials and a slender set of accelerators. The opposite is the quickly increasing actuality of enterprise inference, the place organizations deploy fashions to serve customers, course of dwell knowledge, and generate measurable enterprise worth. The Dell PowerEdge XE7740 is designed explicitly for this second world.

Key Takeaways

- The PowerEdge XE7740 is constructed for enterprise inference, with dual-zone thermals, structured PCIe Gen5 topology, and scale-out networking aligned to actual manufacturing workloads.

- System steadiness is intentional, combining Xeon 6 core density, excessive reminiscence bandwidth, and PCIe Gen 5 E3.S NVMe to help KV cache offload and orchestration.

- Silicon flexibility is foundational, supporting a broad vary of PCIe Gen5 accelerators with out forcing infrastructure redesign.

- The platform scales cleanly over time, from partial GPU inhabitants in a single chassis to distributed inference throughout racks utilizing eight rear Gen5 x16 devoted networking slots.

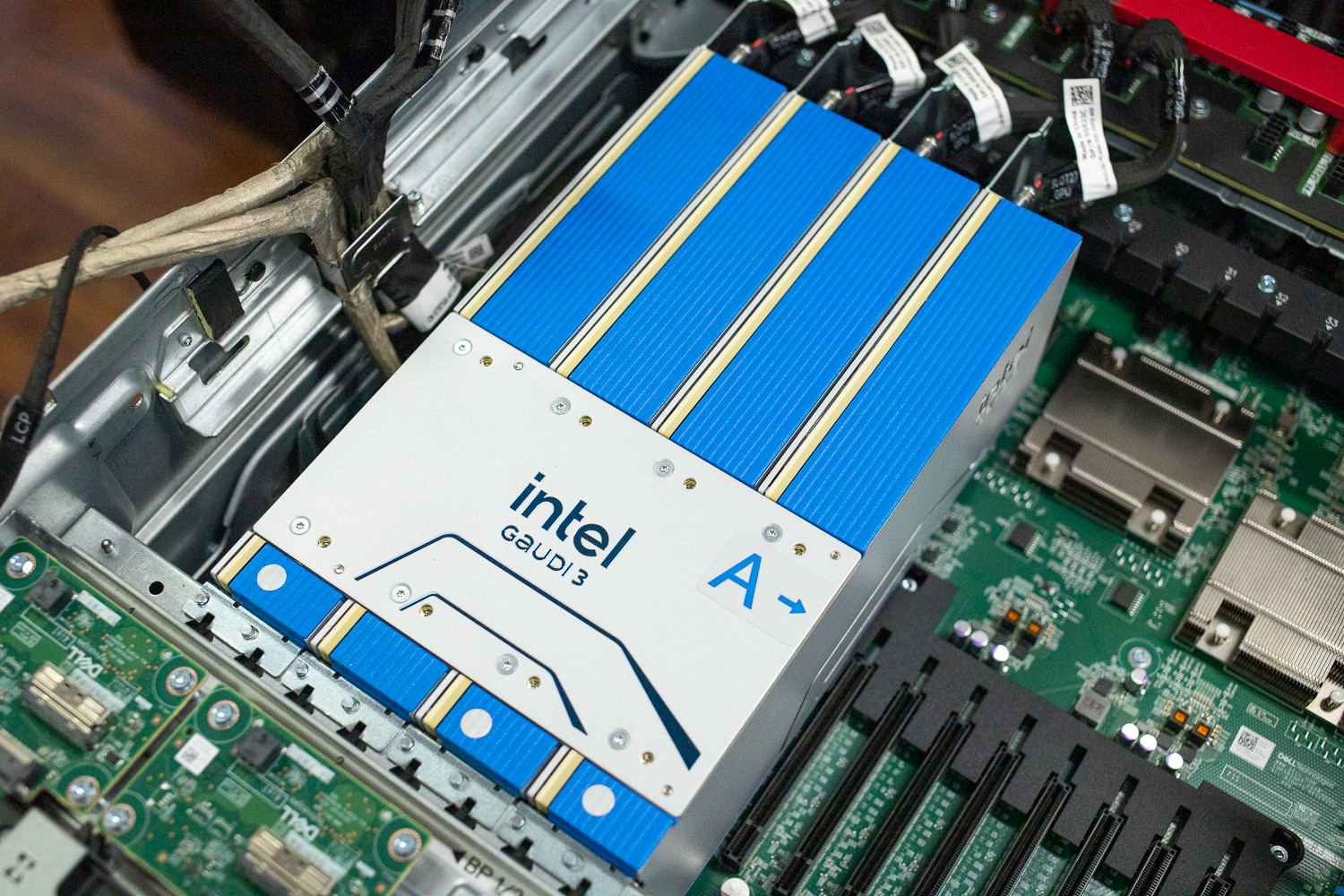

On the core of the XE7740 is a dedication to silicon variety. Reasonably than anchoring the platform to a single accelerator roadmap, Dell has constructed a system that adapts to availability, price, and organizational readiness. The XE7740 helps a spread of PCIe Gen5 accelerators, together with NVIDIA’s RTX PRO 6000, H100/200, L40S, L4, and A16 GPUs for organizations that worth broad ecosystem compatibility, and Intel Gaudi 3 for groups looking for a less expensive, available inference path. Gaudi 3 accelerators can be found as we speak, permitting organizations to maneuver from planning to deployment with out the procurement delays that always form accelerator technique.

As inference turns into the dominant AI workload, the supply and price construction matter. Most enterprises aren’t coaching frontier-scale fashions. They’re operating inference pipelines, serving mid-size language fashions, powering retrieval-augmented technology workflows, and deploying laptop imaginative and prescient in manufacturing. On this context, Gaudi 3 is positioned as some of the inexpensive fashionable inference accelerators available on the market, providing a up to date structure with high-bandwidth reminiscence and Ethernet-based scale-out with out the associated fee profile of flagship coaching GPUs. Throughout the XE7740, Gaudi 3 is much less about displacement and extra about enabling sustainable inference deployments.

The platform surrounding the accelerators is equally deliberate. The XE7740 is constructed on Intel Xeon 6 processors, and in inference-focused techniques, the CPU stays a essential element. Excessive core counts and elevated reminiscence bandwidth present the headroom required for schedulers, tokenization, preprocessing, and orchestration duties that sit instantly on the inference essential path. Entrance-mounted E3.S NVMe storage additional helps native knowledge staging and KV cache offload, decreasing accelerator load and bettering general system effectivity. This balanced design displays an understanding that inference efficiency is formed by your entire system, not by accelerators alone.

The XE7740 can be engineered to scale cleanly over time. Organizations can start with a modest configuration, corresponding to two or 4 accelerators, and extract speedy worth with out totally populating the chassis. As necessities develop, the identical platform can scale vertically or transition into distributed inference. Eight rear-facing PCIe Gen5 x16 slots ship devoted bandwidth for high-speed networking, enabling the XE7740 to function a constructing block for scale-out inference clusters. Elective DPU help extends this flexibility additional by offloading networking and communication duties as deployments mature.

Key Dell PowerEdge XE7740 Specs

| Specification | PowerEdge XE7740 |

|---|---|

| Options of PowerEdge XE7740 | |

| Processor | Two Intel® Xeon® 6 sequence processors, with as much as 86 cores per processor |

| Slots | |

| PCIe Accelerators | 8x PCIe Gen 5 x16 DW-FHFL as much as 600 W, or

16x PCIe Gen 5 x16 SW-FHFL as much as 75 W |

| PCIe NICs |

|

| Type issue | |

| Type issue | 4U rack server |

| Reminiscence | |

| DIMM pace, most capability | As much as 6400 MT/s, 4 TB max |

| Reminiscence module slots | 32 DDR5 DIMM slots Helps registered ECC DDR5 RDIMM solely. |

| Storage | |

| Entrance bays | As much as 8 x EDSFF E3.S Gen5 NVMe (SSD) max 122.88 TB |

| Storage controllers | |

| Inner boot | Boot Optimized Storage Subsystem (BOSS-N1 DC-MHS): HWRAID 1, 2 x M.2

NVMe SSDs |

| Energy provide | |

| Energy provide | 3200 W Titanium 200-240 V AC or 240 V DC, scorching swap redundant

Multi-capacity for 3200 W PSU:

Multi-capacity for 2400 W PSU:

CAUTION: The system requires a minimum of one PSU within the CPU zone and one PSU within the GPU zone to take care of BMC and standby energy. If the GPU zone has no PSU put in, then the system will stay on maintain. To make sure full redundancy, set up N+N PSUs in every zone: 1+1 within the CPU zone and three+3 within the GPU zone. Eradicating all PSUs from the CPU zone whereas the system is powered on will trigger a direct shutdown and will lead to knowledge loss. |

| Cooling Choices | |

| Cooling Choices | Air Cooling |

| Followers | As much as 4 units of high-performance (HPR) platinum-grade followers (twin fan module) put in within the mid tray

As much as twelve high-performance (HPR) platinum-grade followers put in on the entrance of the system All are hot-swap followers |

| Ports | |

| Community choices | 1 PCIe Gen 5 OCP 3.0 Appropriate I/O (supported by x8 PCIe lanes) |

| Entrance ports | 1 x USB 2.0 Sort-A (non-obligatory) 1 x Mini-Show port (non-obligatory) 1 x USB 2.0 Sort-C twin mode (Host/iDRAC Direct port) |

| Rear ports | 1 x Devoted iDRAC/BMC Direct Ethernet port 2 x USB 3.1 Sort A port 1 x VGA |

| Inner ports | 1 x USB 3.1 Sort-A |

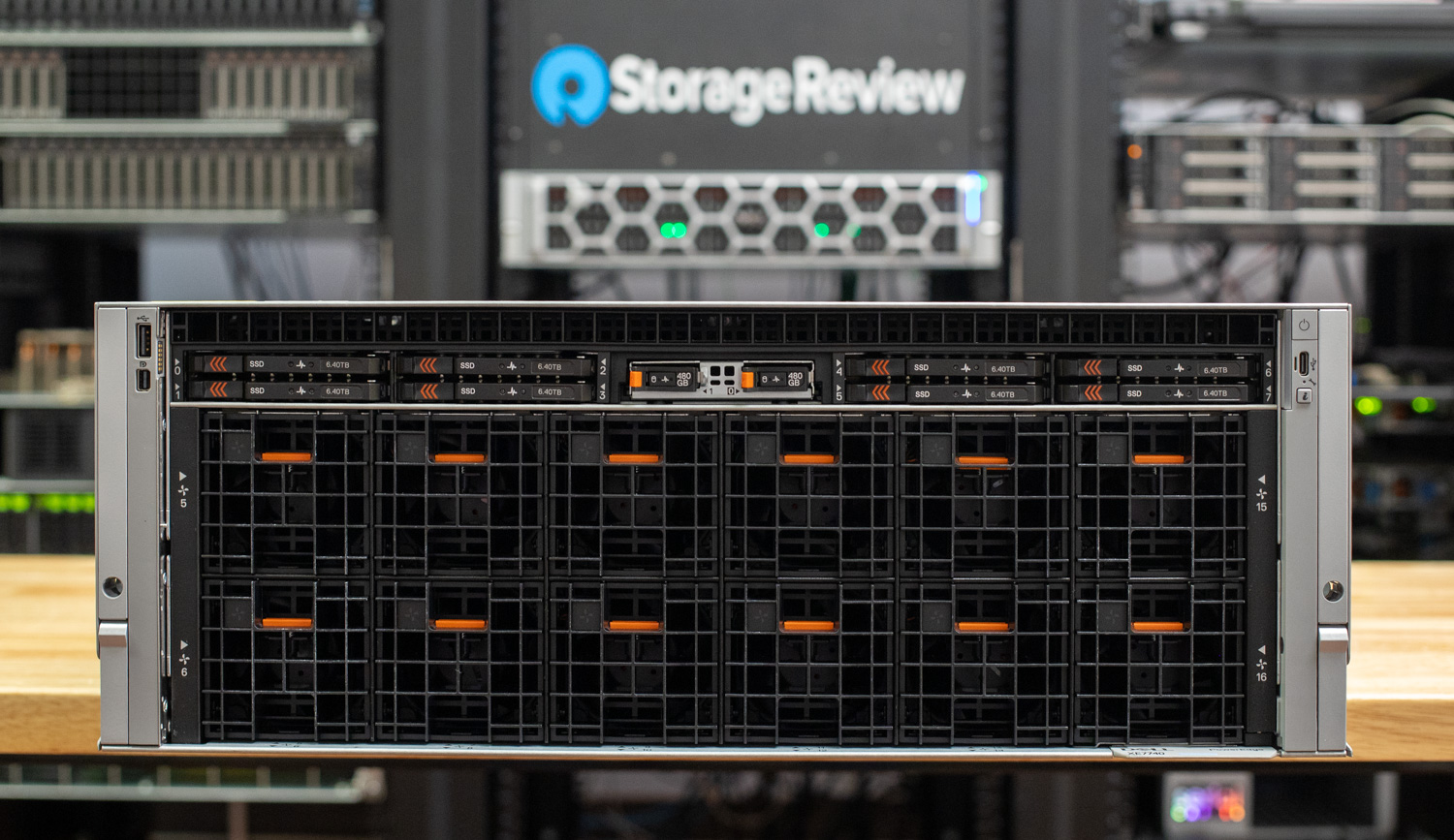

XE7740 Design and Construct

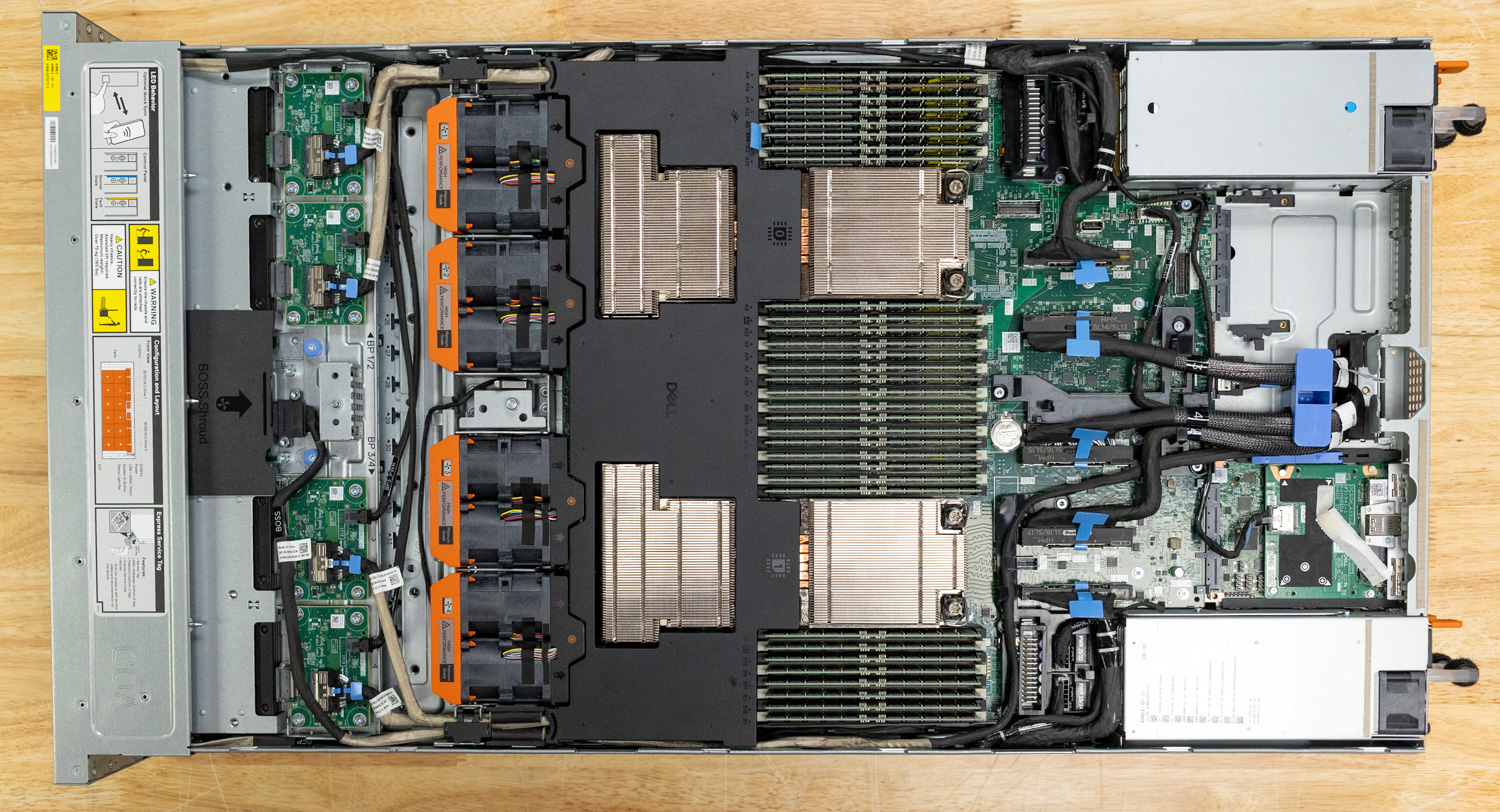

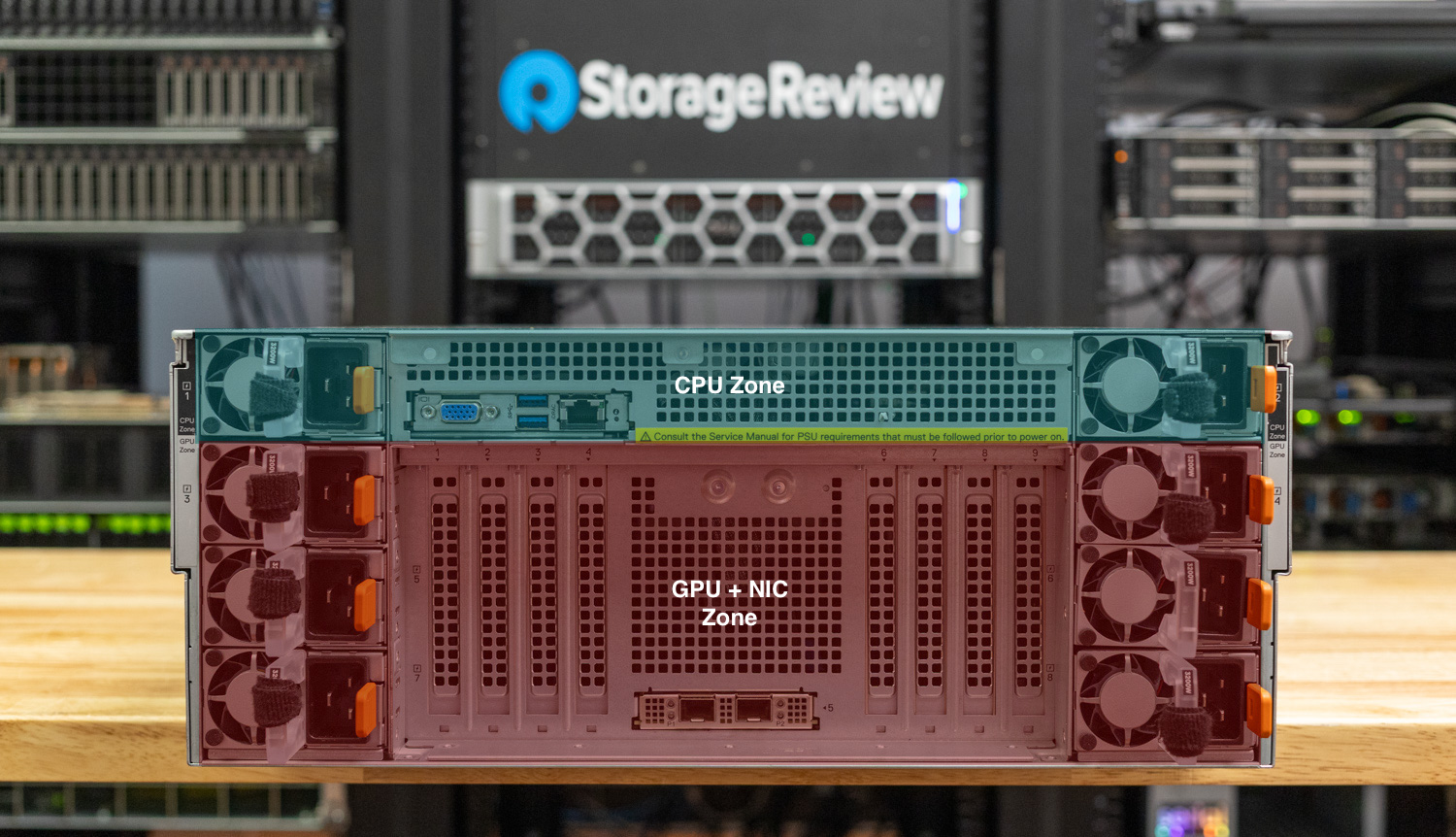

Twin-Zone Structure: CPU and GPU Separation

One of the distinctive design decisions within the XE7740 is its bodily separation into two distinct thermal and energy zones. The higher 1U part homes the CPU zone, comprising each Xeon 6 processors, all 32 DIMM slots, the storage, and the DC-SCM administration module. The CPU zone makes use of 4 units of high-performance dual-fan modules (40×40×56mm) that provide 47.4 CFM of airflow.

The decrease 3U part is the GPU zone, containing all accelerator slots with their very own devoted cooling infrastructure, alongside the PCIe Base Board (PBB), rear-facing PCIe growth slots, and OCP NIC connectivity. The GPU zone employs twelve bigger high-performance followers (60×60×56mm) with considerably larger airflow capability as much as 122.2 CFM per fan in comparison with the CPU followers. All followers are hot-swappable. This dual-zone cooling strategy signifies that the thermal calls for of high-TDP accelerators (as much as 600W per card) don’t compromise CPU and reminiscence cooling, and vice versa, in a 19-inch customary rack.

Dell has paid shut consideration to airflow optimization within the XE7740. Accelerator-dense techniques inherently require substantial inside cabling, together with GPU auxiliary energy leads, PCIe sign cables between the HPM board and the PBB, and fan board connections. Within the XE7740, these cables are routed alongside the facet partitions of the chassis utilizing devoted cable-holding brackets and cable-cover assemblies. Every cable is constructed to the precise size required; there’s no extra cable bundles contained in the system. By protecting cabling out of the central airflow channel, the design preserves a transparent front-to-rear airflow path and minimizes impedance throughout each the CPU and GPU cooling zones.

In a chassis housing as much as eight 600W accelerators, even small obstructions within the airflow path can create localized scorching spots and drive followers to run at larger speeds—rising each energy consumption and acoustic output. Dell’s cable administration strategy retains the middle of the chassis clear for direct, unobstructed airflow over the parts that want it most.

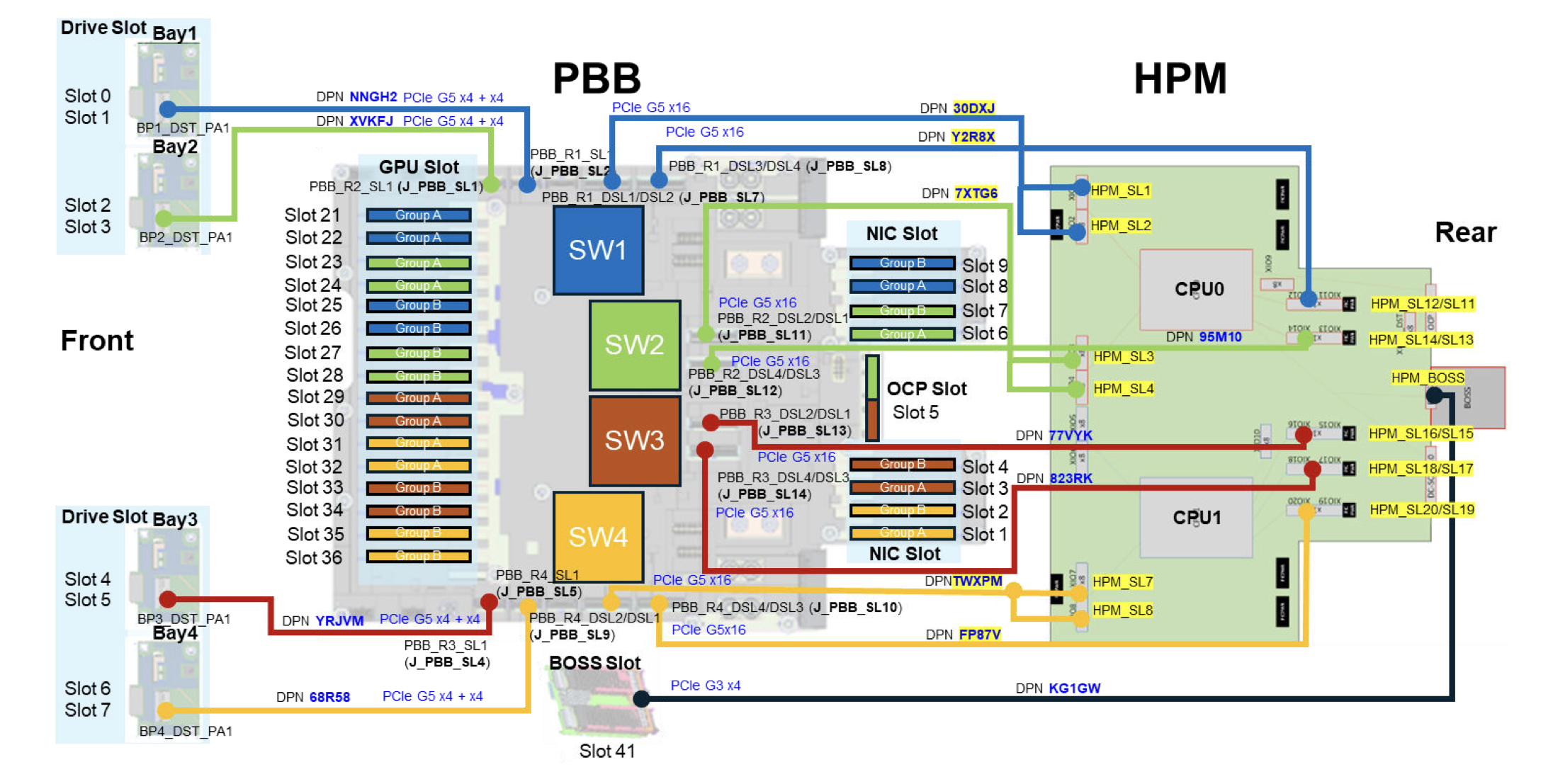

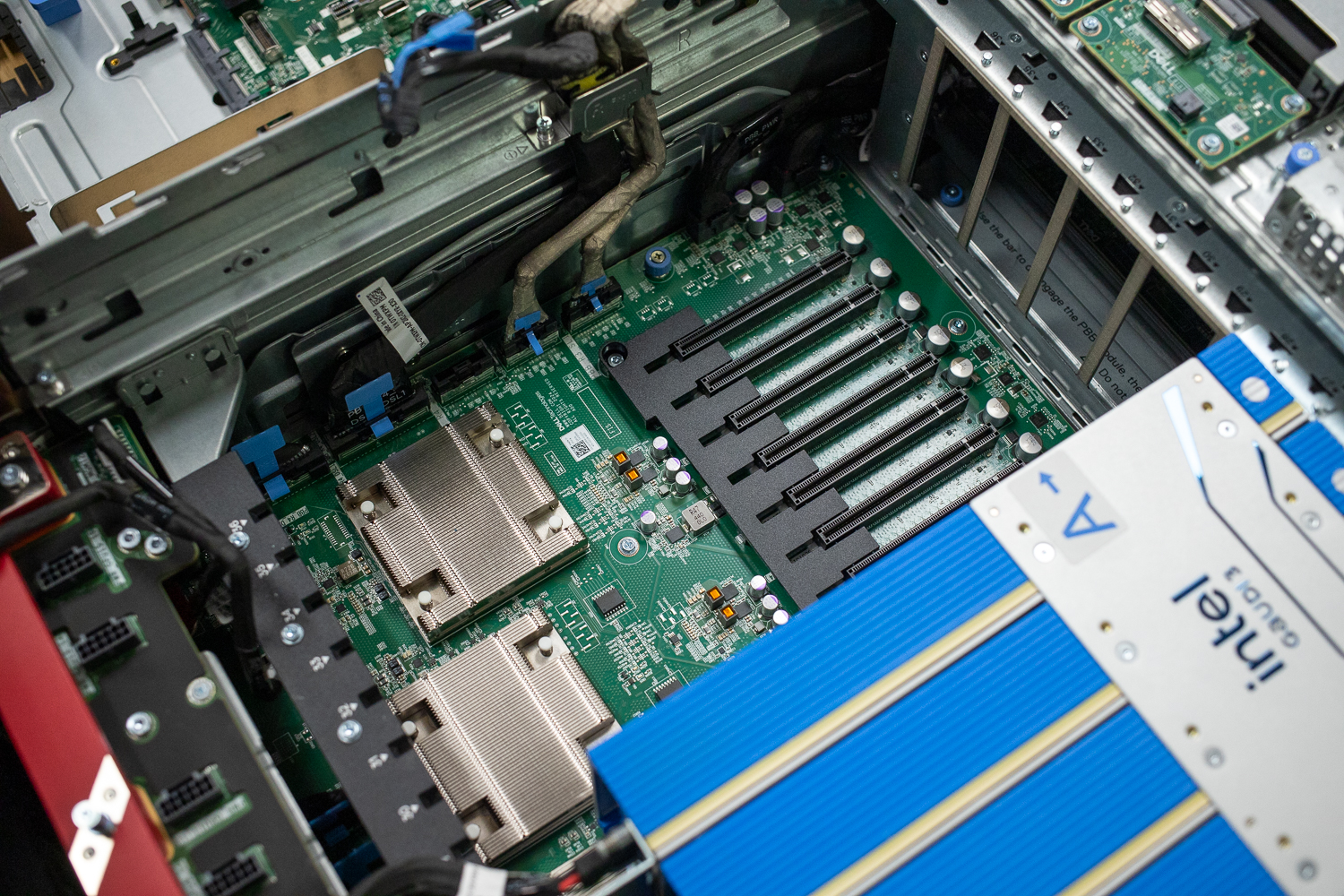

PCIe Change Topology and Knowledge Circulation

The XE7740’s PCIe subsystem is constructed round 4 PCIe Gen 5 switches on the PBB (PCIe Base Board), designated SW1 by way of SW4. These switches type the spine of the system’s I/O structure, connecting accelerators, networking, and storage to the 2 Xeon 6 processors in a fastidiously organized topology.

The 16 inside GPU slots are divided into two banks of eight, with every financial institution served by a pair of PCIe switches, every related upstream to a CPU. Inside every financial institution, pairs of adjoining double-width GPU slots interleave throughout the 2 switches. On the CPU0 facet, SW1 serves GPU slots 21 and 25, in addition to rear PCIe slots 8 and 9, whereas SW2 serves GPU slots 23 and 27, in addition to rear PCIe slots 6 and seven. On the CPU1 facet, SW3 serves GPU slots 29 and 33, in addition to rear PCIe slots 3 and 4, whereas SW4 serves GPU slots 31 and 35, in addition to rear PCIe slots 1 and a pair of.

Every CPU area, subsequently, owns 4 double-width accelerator slots, 4 rear-facing NIC/I/O slots, and 4 of the eight front-facing E3.S NVMe storage bays. This swap topology has vital implications for knowledge move, notably for RDMA-based site visitors. As a result of every swap hosts each accelerator and rear NIC slots, an accelerator and a community adapter on the identical swap can carry out RDMA transfers completely inside the swap cloth. The info by no means must traverse the CPU’s root advanced, eliminating the CPU bounce buffer that will in any other case be required when a PCIe machine on one root port communicates with a tool on one other. This reduces latency, avoids consuming valuable CPU reminiscence bandwidth, and frees CPU cycles for different work.

Communication inside a single CPU’s area between its two switches routes by way of the CPU root advanced however stays native to that socket. Cross-CPU communication, nonetheless, should traverse the UPI hyperlinks between the 2 Xeon 6 processors. The 6787P offers 4 UPI 2.0 hyperlinks at 24 GT/s every, providing substantial inter-socket bandwidth. Nonetheless, site visitors between an accelerator on CPU0’s swap financial institution and a NIC on CPU1’s swap financial institution will inherently carry larger latency than switch-local or same-socket transfers.

The switches themselves aren’t instantly interconnected. All cross-switch site visitors routes by way of the CPU root advanced, so understanding the affinity between GPU slots, NIC slots, storage, and CPU sockets is necessary. To simplify this complexity for organizations, Dell presents validated and optimized configurations for common accelerators.

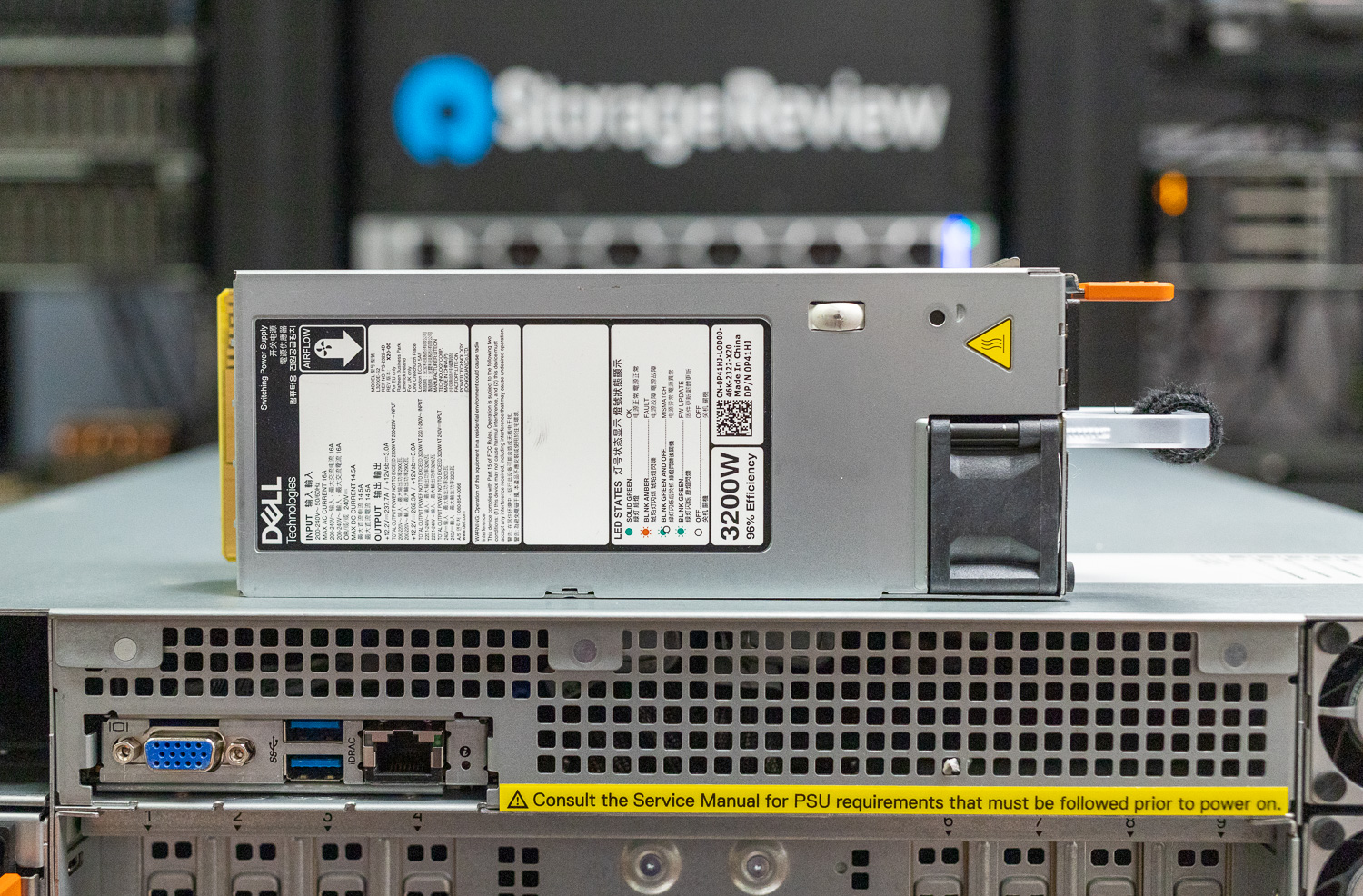

Twin-Zone Energy Provide

The XE7740’s energy supply mirrors its thermal structure with a considerably uncommon dual-zone PSU design. The system helps as much as eight hot-swappable energy provide items, divided throughout two zones: Zone 1 (CPU zone) holds PSUs 1 and a pair of, whereas Zone 2 (GPU zone) holds PSUs 3 by way of 8.

The system requires a minimum of one PSU in every zone to take care of BMC and standby energy. If both zone loses AC energy whereas the system is operating, the system instantly shuts down to forestall knowledge loss. The zones are interdependent for operation, although they’re bodily and electrically separated. For full redundancy, Dell recommends a 1+1 configuration within the CPU zone and a 3+3 configuration within the GPU zone, which means all eight PSU bays ought to be populated for a totally redundant deployment.

Dell AIOps, Administration, and Enterprise Reliability

Dell’s PowerEdge platform has earned a popularity within the business for reliability and serviceability. Enterprise clients persistently spotlight the identical themes: PowerEdge techniques are constructed to final, Dell’s help group resolves points shortly, and the administration tooling is mature and well-integrated. The XE7740 continues this custom, and Dell has made significant advances in each {hardware} administration and safety with this technology.

iDRAC 10

The XE7740 ships with Dell’s next-generation iDRAC 10, a considerable facelift over the already succesful iDRAC 9 that Dell clients have relied on for years. Carried out as a Knowledge Heart Safe Management Module (DC-SCM) in accordance with the OCP DC-MHS customary, iDRAC 10 isn’t merely a firmware replace; it represents new {hardware}. The controller options 4 1 GHz cores with a 64-bit structure and a pair of GB of DDR4 reminiscence (twice that of the earlier technology), delivering considerably improved efficiency and responsiveness for administration operations.

On the safety entrance, iDRAC 10 introduces a number of notable enhancements. The platform options stronger cryptographic help throughout the board, together with SHA-384 and SHA-512 authentication and quantum-safe AES-256 encryption, because the business prepares for post-quantum cryptographic threats. A devoted built-in safety enclave inside the iDRAC 10 silicon manages cyber resiliency features, together with device-level attestation and Dell’s customized Root-of-Belief. This hardware-based Root-of-Belief ensures that every one firmware (BIOS, iDRAC, and element firmware) is cryptographically verified earlier than execution, defending in opposition to provide chain assaults and firmware tampering.

Secured Element Verification validates that techniques delivered from Dell’s manufacturing facility arrive with the precise parts and configurations specified by the shopper, sustaining integrity from manufacturing by way of deployment. The most recent iDRAC 10 firmware additionally delivers a refreshed, modular consumer interface that improves the day-to-day administrative expertise.

OpenManage Enterprise

For fleet administration at scale, Dell’s OpenManage Enterprise offers centralized monitoring, firmware updates, and configuration administration throughout complete PowerEdge deployments. A notable latest addition for AI-focused deployments is that OME now helps direct visibility into GPU and accelerator statistics: energy consumption, temperature, utilization, error counts, and extra, with out requiring separate vendor-specific instruments. For organizations managing dozens or lots of of XE7740 nodes in an inference cluster, this unified administration airplane is a major operational simplification.

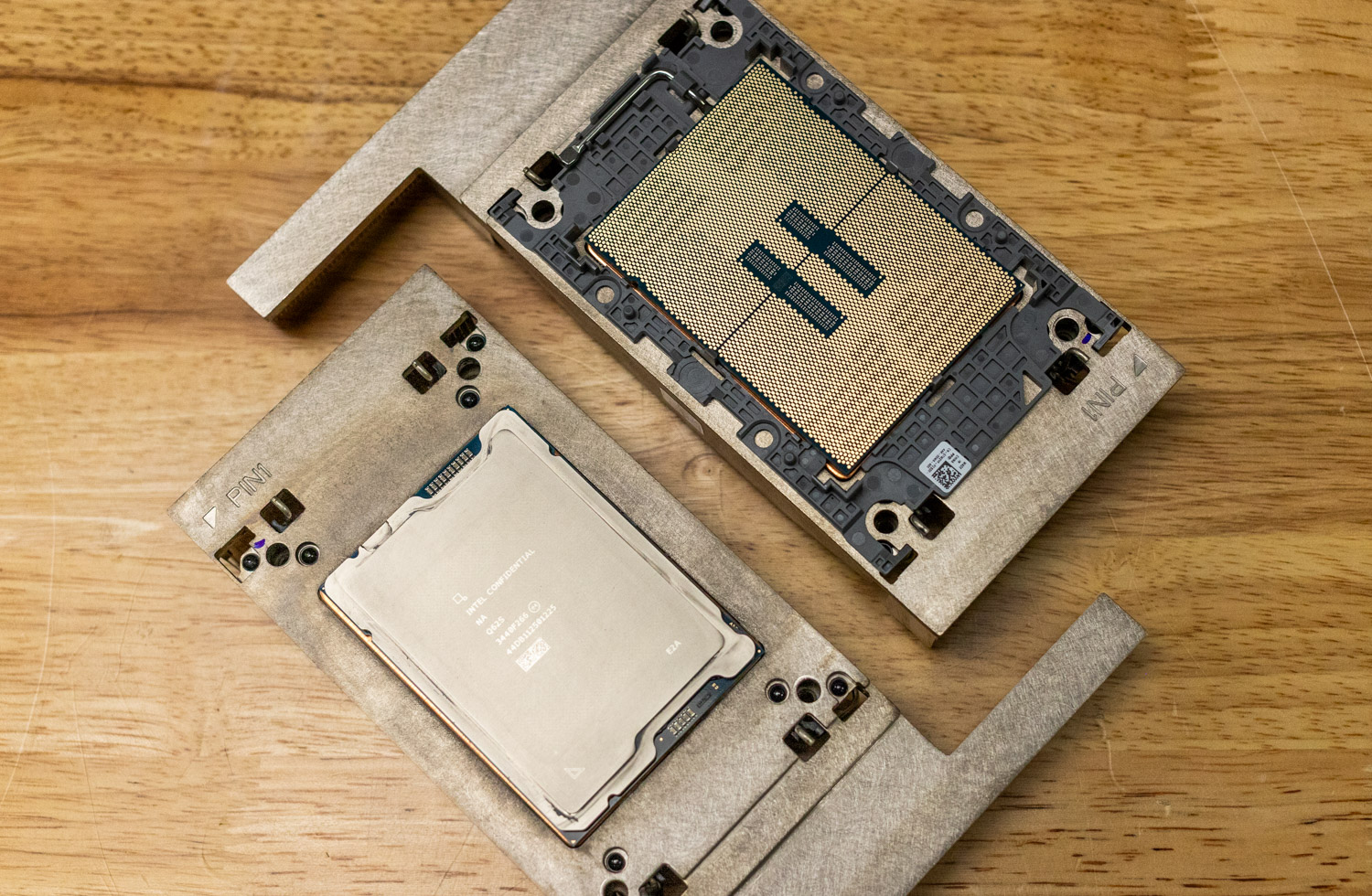

Intel Xeon 6

On the coronary heart of the XE7740 are two Intel Xeon 6 6787P processors, the flagship of the Xeon 6700P sequence. Constructed on the Granite Rapids structure utilizing Intel 3 nm expertise, the 6787P delivers 86 P-cores (172 threads) per socket at a 350W TDP, with a base clock of two.0 GHz and a turbo clock of three.8 GHz.

What makes Granite Rapids notably related for AI infrastructure is its mixture of excessive core counts and its reminiscence subsystem. Every 6787P processor offers eight DDR5 reminiscence channels at as much as 6400 MT/s. With a dual-socket XE7740 populated with 32 DIMMs, the system will be configured for as much as 4 TB of whole system reminiscence.

Reminiscence capability and bandwidth are essential for AI workloads, particularly when using KV cache offloading. As massive language fashions enhance context size, the KV cache scales proportionally and may devour a major quantity of accelerator reminiscence. Offloading parts of the KV cache to system reminiscence or quick storage permits the accelerator’s HBM for use extra effectively for energetic computation, decreasing time to first token (TTFT) for multi-turn chats.

It’s additionally value noting the Xeon 6’s AMX tensor items, which deal with vital work on the CPU facet. These embody preprocessing, tokenization, and hybrid inference duties that contain matrix ops. This turns into particularly helpful with inference frameworks like SGLang, which use the CPU for Radix Tree for KV Cache Administration and Zero Overhead scheduling.

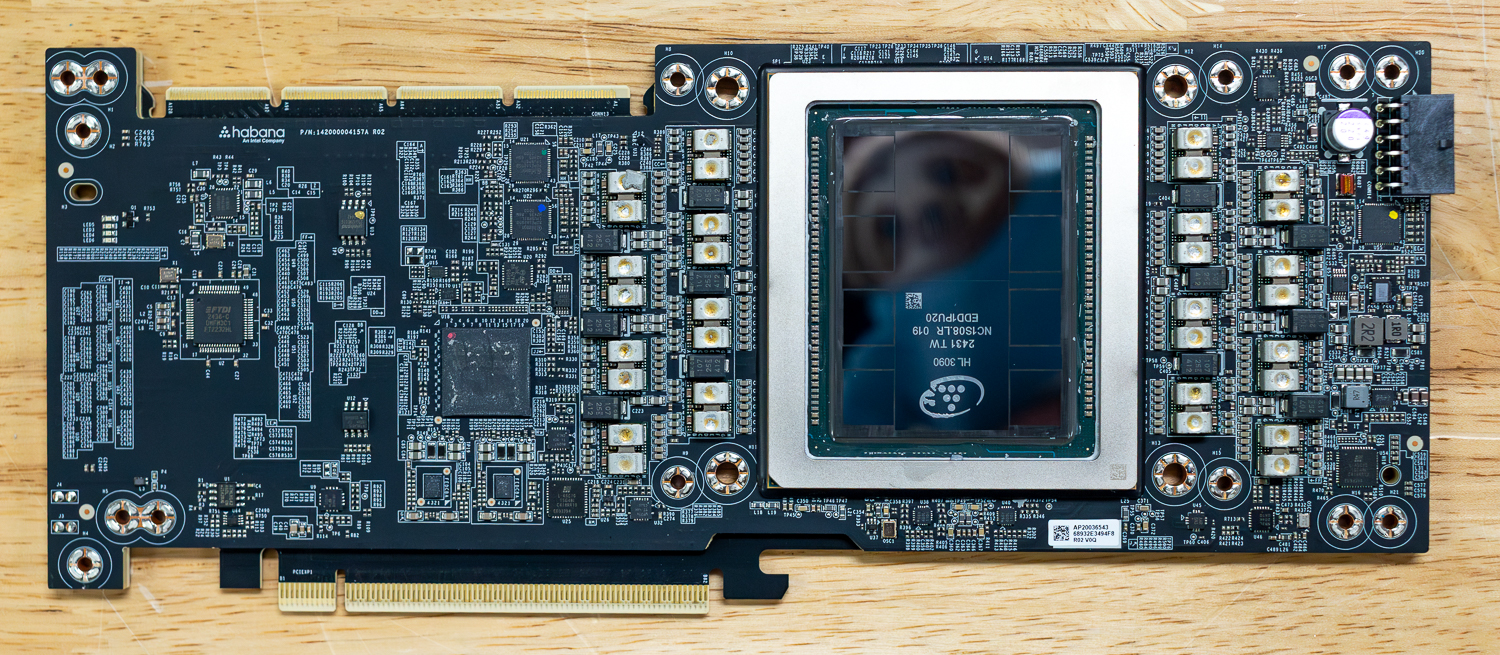

Intel Gaudi 3 Add-in Playing cards: Aggressive Inference at Scale

Intel’s Gaudi 3 is the Firm’s flagship AI accelerator, launched in This autumn 2024. Intel is positioning these accelerators very aggressively moderately than competing head-to-head with the highest-tier knowledge middle coaching accelerators. The Gaudi 3 is being aimed squarely on the inference phase.

Transformer-based mannequin inference in all common LLMs as we speak is basically memory-bound. Throughout the decode part of autoregressive technology, the mannequin generates tokens one after the other, studying the mannequin weights and KV cache entries for every token produced. The bottleneck isn’t compute capabilities however the reminiscence bandwidth, which is how shortly the accelerator can stream knowledge from HBM to the compute engines.

The Gaudi 3 options 128 GB of HBM2e with 3.7 TB/s of reminiscence bandwidth. Architecturally, the Gaudi 3 is constructed on TSMC’s 5nm course of and makes use of a dual-die chiplet design: two similar silicon dies joined by a high-bandwidth interconnect, presenting as a single unified machine to software program. Compute is organized into 4 Deep Studying Cores (DCOREs), every containing 2 MMEs, 16 TPCs, and 24 MB of native SRAM cache. The overall 96 MB of on-die SRAM offers 12.8 TB/s of inside bandwidth. The accelerator additionally integrates 14 devoted media decoders (H.265, H.264, JPEG, VP9), enabling quick imaginative and prescient preprocessing for multi-modal workloads.

A big chunk of the frontier open-source AI fashions being launched as we speak are both natively FP8-trained or hybrid fashions that blend FP8 (E4M3) and BF16 weights. The Gaudi 3 offers native FP8 acceleration for these throughout its 8 Matrix Multiplication Engines and 64 Tensor Processor Cores, delivering 1.8 PFlops of FP8 compute.

The Gaudi 3 additionally integrates RDMA over Converged Ethernet (RoCEv2) networking with 24×200 GbE ports on the OAM model, constructed instantly into the silicon. Whereas the PCIe add-in card variant used within the XE7740 doesn’t expose all of those ports in the identical approach, the add-in card variants help bridging 4 playing cards for sooner communication between them.

Efficiency and Benchmarks

XE7740 configuration particulars:

- 2 x Intel Xeon 6787P Processor (86-Cores, 2.00 GHz)

- 2TB DDR5 (32 x 64GB 5200MT/s DDR5

- 4 x Intel Gaudi 3 PCIe AI Accelerator w/ 128GB of HBM

- Ubuntu 24.04.5 Server

vLLM On-line Serving Efficiency

To judge the inference capabilities of the Dell XE7740 powered by Intel Gaudi 3 accelerators, we benchmarked vLLM on-line serving efficiency throughout a spread of common fashions spanning completely different architectures, parameter counts, and precision codecs. Every mannequin was examined throughout three workload profiles with concurrent request counts scaling from 1 to 128.

LLM inference consists of two distinct phases. The prefill part processes all enter tokens in parallel earlier than any output token will be generated, making it a compute-bound operation that scales linearly with the variety of enter tokens. The decode part then generates output tokens one after the other (autoregressively), the place every new token requires studying the total mannequin weights from reminiscence, however performs comparatively little per-token computation—making it memory-bandwidth certain.

These two phases stress basically completely different elements of the accelerator, so we check three workload profiles that shift the steadiness between them:

- Equal (1024 enter/1024 output tokens) represents balanced chat interactions.

- Prefill Heavy (8192 enter/1024 output) simulates retrieval-augmented technology or long-context summarization, through which the system should course of massive enter contexts.

- Decode Heavy (1024 enter/8192 output) represents long-form content material technology the place sustained reminiscence bandwidth determines throughput.

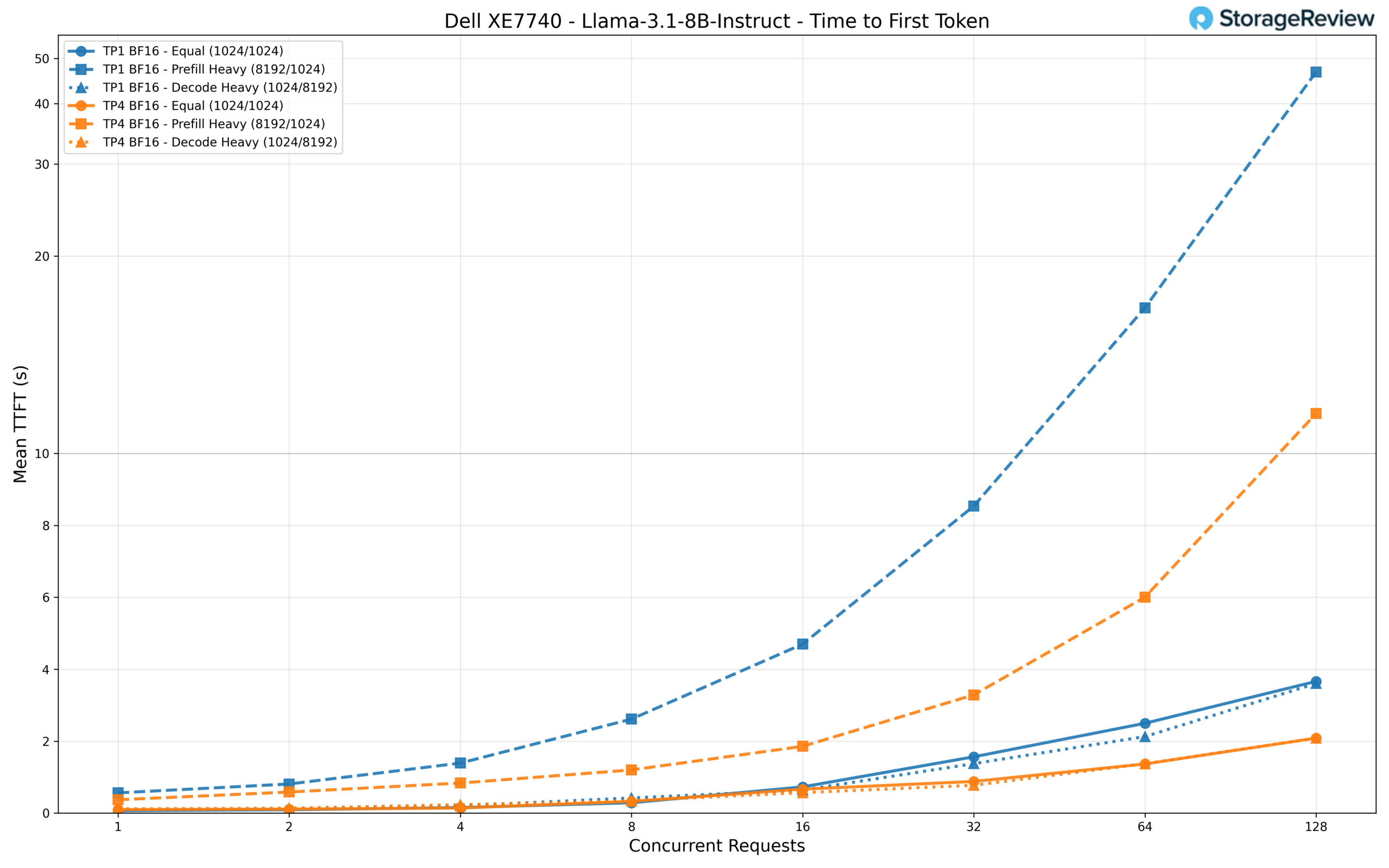

We deal with two main metrics all through this part. Whole token throughput, measured in tokens per second, captures the system’s general serving capability below load. Time to first token (TTFT) measures the delay between submitting a request and receiving the primary generated token. As a result of the mannequin should full your entire prefill part earlier than it might emit the primary token, TTFT is instantly tied to the accelerator’s compute throughput. This makes the prefill-heavy situation (mixed with TTFT) a very helpful proxy for understanding the uncooked compute capabilities of the Gaudi 3 accelerators, because the system should course of all 8,192 enter tokens earlier than the consumer sees any response.

Conversely, the decode-heavy situation assessments the accelerators’ reminiscence bandwidth, because the system should maintain excessive throughput for hundreds of generated tokens. TTFT is essential for interactive purposes through which customers look forward to a response earlier than streaming can start. A system can obtain wonderful throughput below heavy batching however nonetheless really feel sluggish if TTFT rises too excessive, so each metrics matter for manufacturing deployments.

A notice on FP8 precision outcomes: whereas Intel Gaudi 3 accelerators embody native FP8 compute acceleration (and FP8 ought to, in principle, ship larger throughput than BF16), the FP8 efficiency numbers in our benchmarks are decrease than their BF16 counterparts. This isn’t a {hardware} limitation however moderately a software program maturity difficulty inside Intel’s fork of vLLM. The model we examined (vLLM installer 2.7.1 on Gaudi Docker 1.22.2) has not but totally optimized its FP8 code paths. Intel has a brand new plugin-based model of vLLM at present in beta which will tackle many of those efficiency challenges.

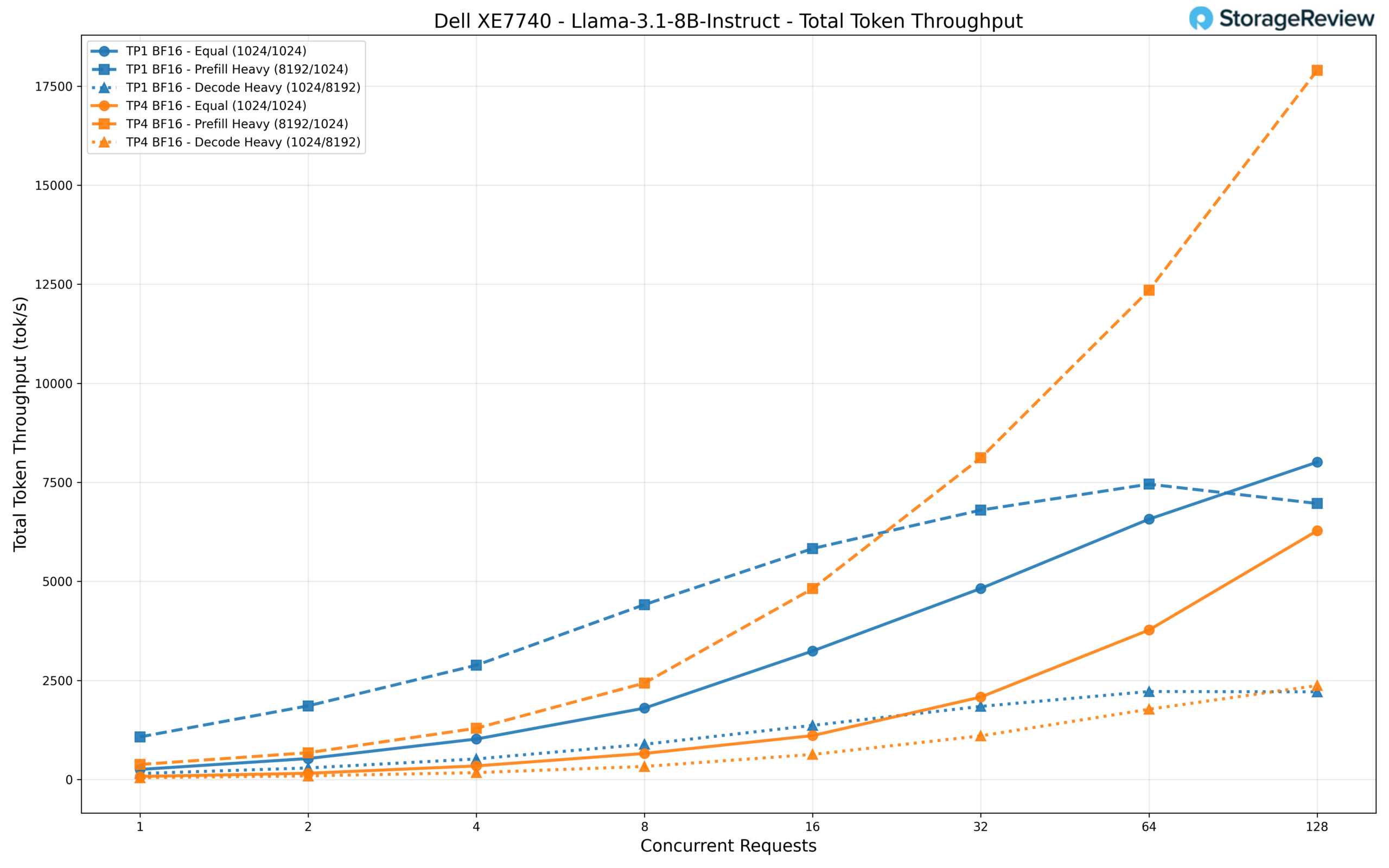

Llama 3.1 8B Instruct

Llama 3.1 8B Instruct is a dense transformer mannequin from Meta, which means each parameter is energetic for each token generated. With 8 billion parameters, it’s among the many extra open-source fashions. Fashions on this dimension class are common for on a regular basis duties corresponding to summarizing brief paperwork, drafting emails and messages, answering easy questions, and powering easy chatbot interactions the place pace and price effectivity matter greater than deep reasoning.

We examined this mannequin in each TP1 (single accelerator) and TP4 (all 4 Gaudi 3 accelerators) configurations. Operating on TP1, the mannequin achieves roughly 8,000 tok/s whole throughput at 128 concurrent requests within the equal workload, scaling cleanly from round 250 tok/s at a single request. The prefill-heavy situation exhibits an attention-grabbing sample: whereas TP1 peaks at round 7,000 tok/s, TP4 surges to over 17,900 tok/s at 128 concurrent requests, leveraging extra accelerators to course of the big enter context extra effectively.

For single-user latency, TP1 really delivers decrease TTFT at low concurrency (67ms versus 98ms for TP4), reflecting the overhead of coordinating throughout 4 accelerators for a mannequin that matches comfortably on one. Because the load will increase, nonetheless, TP4 pulls forward decisively. At 128 concurrent requests, TP4 holds TTFT to round 2 seconds for equal and decode workloads, whereas TP1 climbs to three.7 seconds and 6.6 seconds, respectively. The prefill-heavy situation is the place the hole turns into most dramatic: TP1 reaches almost 47 seconds of TTFT at 128 requests, whereas TP4 retains it to round 11 seconds.

Llama 3.1 70B Instruct

Llama 3.1 70B Instruct is the bigger dense mannequin in Meta’s Llama 3.1 household. With 70B parameters, it delivers considerably higher instruction-following and multilingual capabilities than the 8B variant. Fashions at this scale are well-suited for extra intensive agentic workloads, corresponding to buyer help brokers, multi-step analysis assistants, advanced doc evaluation, and duties that require sustaining coherent context over longer interactions.

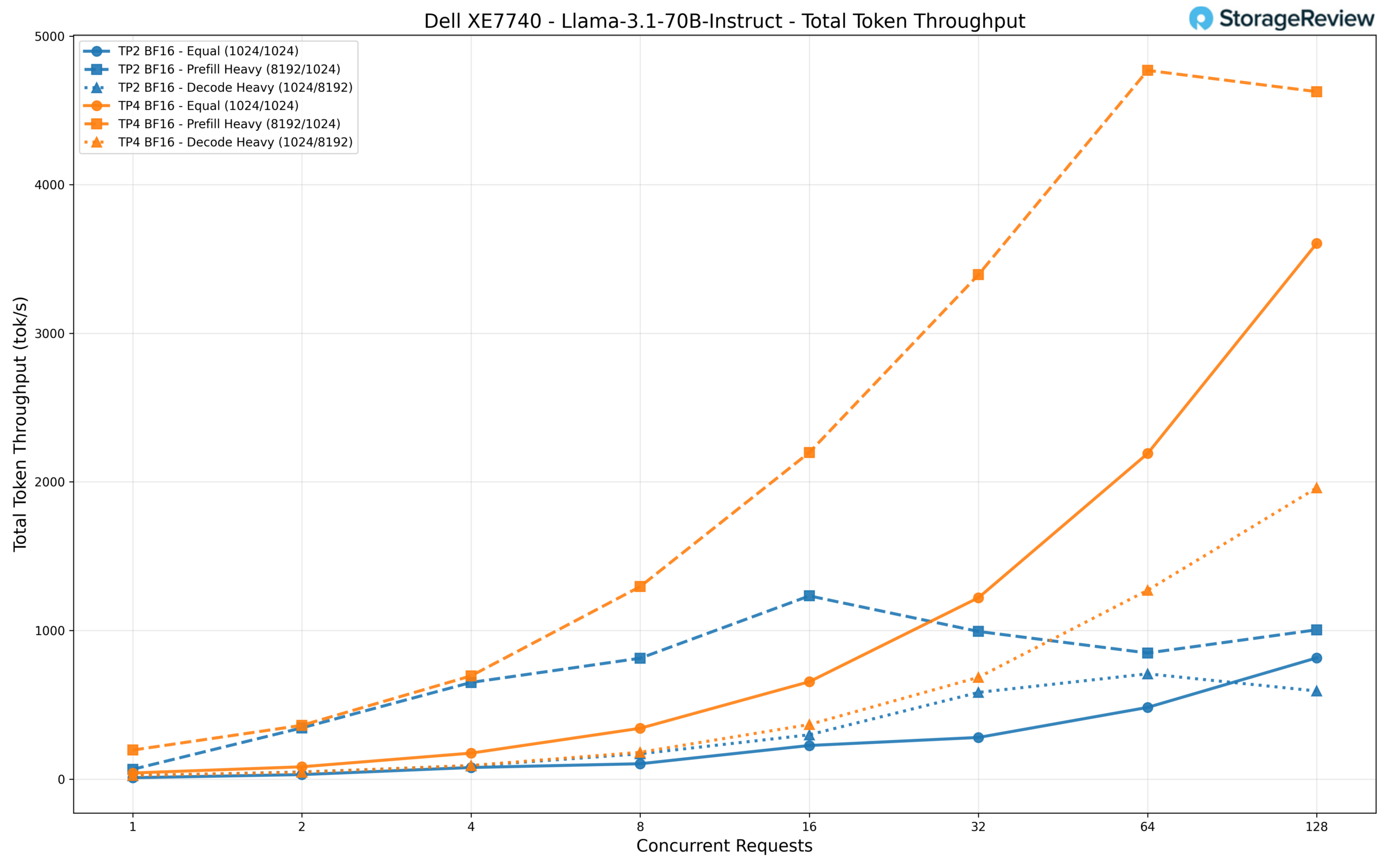

We examined this mannequin with TP2 and TP4 configurations. The throughput distinction between the 2 is substantial. At 128 concurrent requests, TP4 delivers roughly 3,600 tok/s within the equal workload and peaks close to 4,600 tok/s within the prefill-heavy situation. That is roughly 4.4x and 4.6x the throughput of TP2, which maxes out round 816 tok/s and 1,005 tok/s, respectively. Even the decode-heavy workload reaches about 1,960 tok/s on TP4, in contrast with 593 tok/s on TP2.

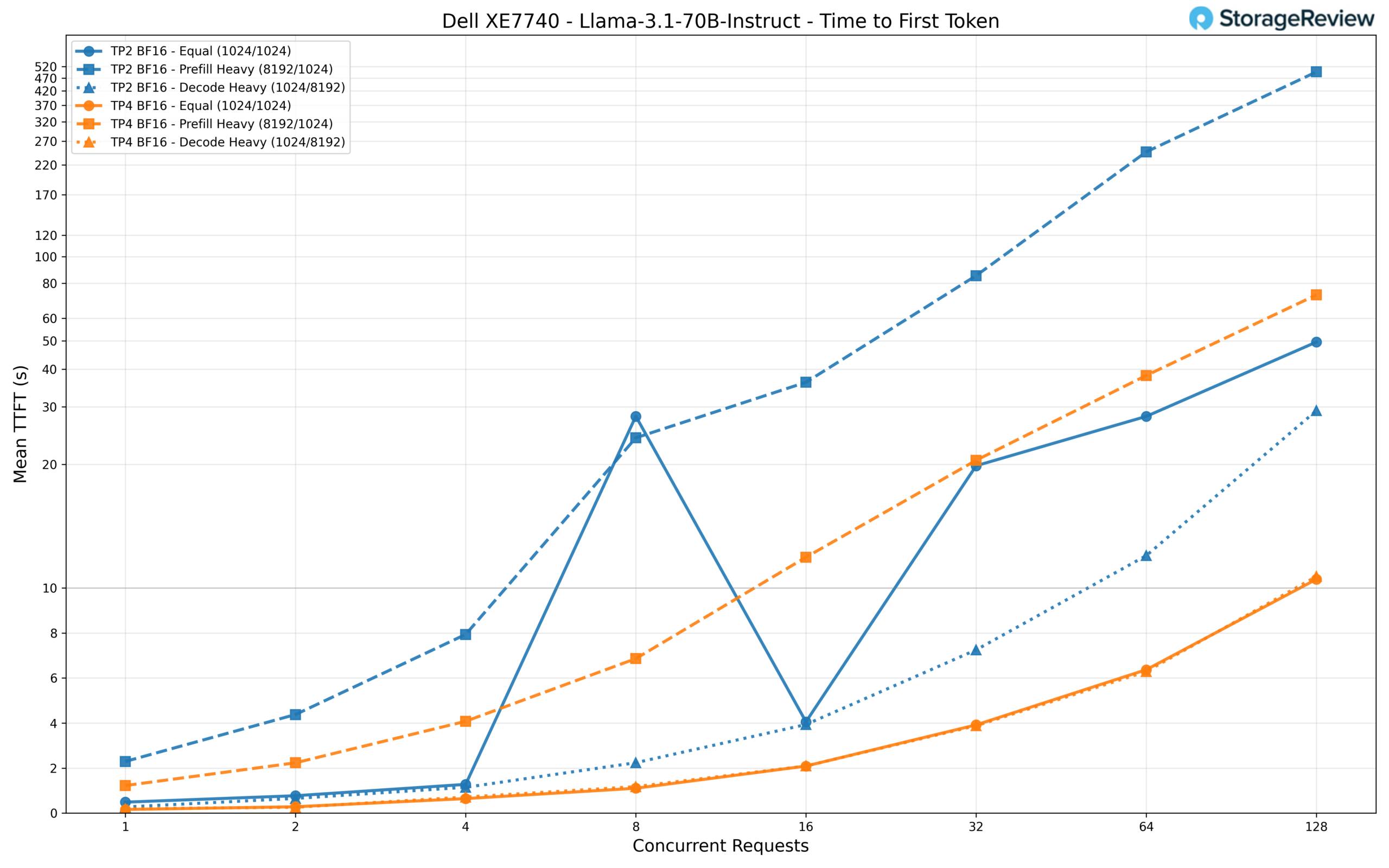

For TTFT, TP2 struggles below load with the prefill-heavy workload, climbing to a staggering 496 seconds at 128 concurrent requests, making it primarily unusable for interactive purposes. TP4 brings this all the way down to round 73 seconds. For the equal and decode workloads, TP4 holds TTFT to roughly 10 seconds at 128 requests, whereas TP2 reaches 50 and 29 seconds, respectively. At low concurrency, TP4 delivers first-token responses in about 160ms for the equal workload, in comparison with 486ms on TP2.

Qwen3 Coder 30B-A3B Instruct

Qwen3 Coder 30B-A3B is without doubt one of the hottest coding fashions for native inference deployments and makes use of a Combination-of-Consultants (MoE) structure. In contrast to dense fashions, the place each parameter participates in each ahead go, MoE fashions route every token by way of a small subset of specialised knowledgeable networks. The Qwen3 Coder maintains a full mannequin dimension of 30B parameters at BF16 precision whereas activating solely 3B parameters per generated token. This sparse activation sample means the mannequin can ship the standard of a a lot bigger community whereas requiring solely a fraction of the compute per token, making it extraordinarily environment friendly on {hardware} that helps the routing overhead. For finish customers, this mannequin is well-suited to on a regular basis coding help, corresponding to producing boilerplate, finishing features, explaining code, writing unit assessments, and dealing with routine growth duties that profit from a code-specialized mannequin with out requiring heavyweight reasoning.

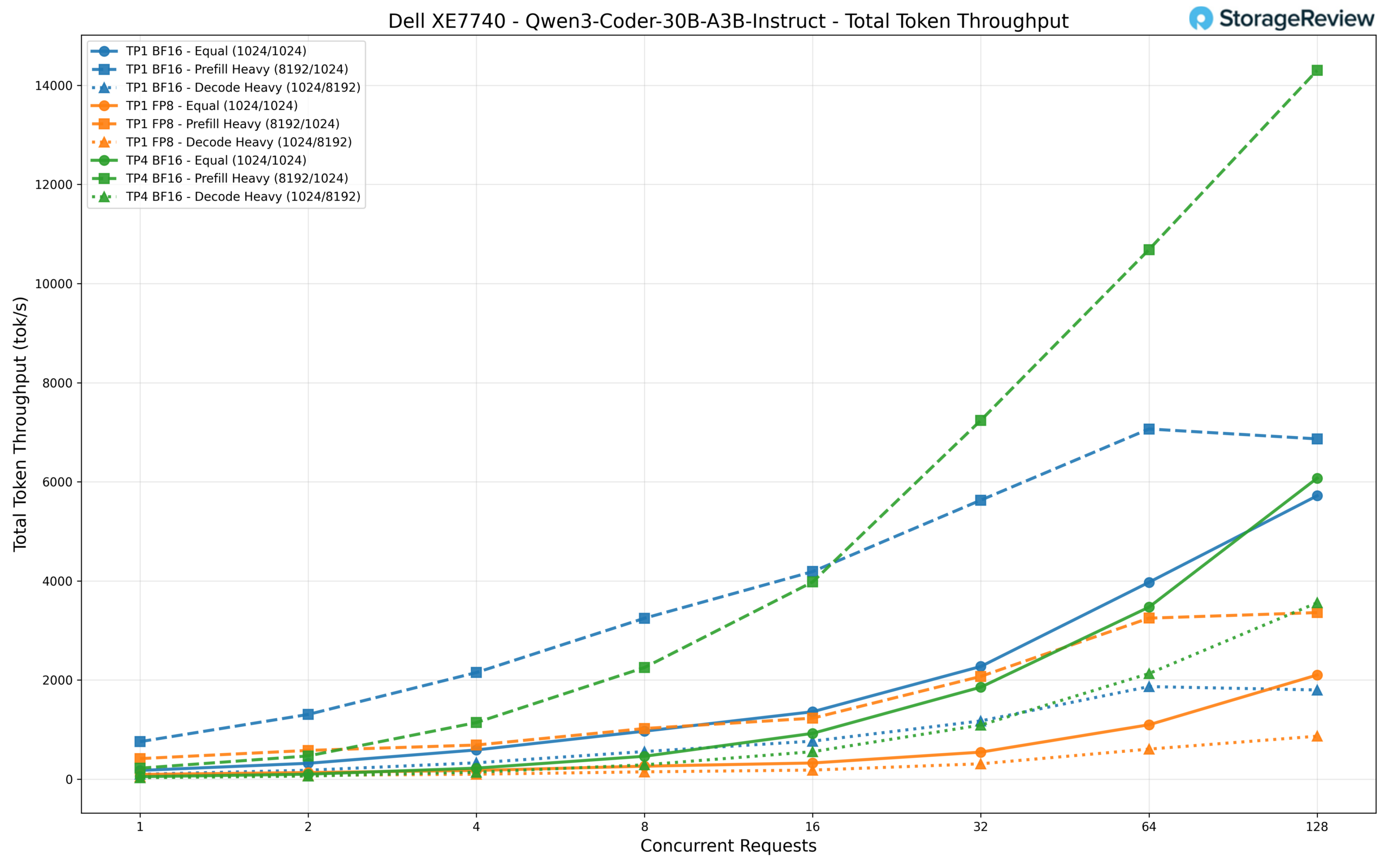

We examined three configurations: TP1 BF16, TP1 FP8, and TP4 BF16. At 128 concurrent requests, TP4 BF16 leads the pack with roughly 14,300 tok/s within the prefill-heavy situation, the best throughput determine we recorded throughout any mannequin in our check suite. TP1 BF16 follows with about 6,900 tok/s in the identical situation, whereas TP1 FP8 trails at round 3,360 tok/s. Within the equal workload, the hole narrows considerably with TP4 at 6,073 tok/s, TP1 BF16 at 5,718 tok/s, and TP1 FP8 at 2,101 tok/s. As mentioned within the FP8 notice above, the decrease FP8 numbers right here mirror the present state of Intel’s vLLM fork moderately than a {hardware} bottleneck.

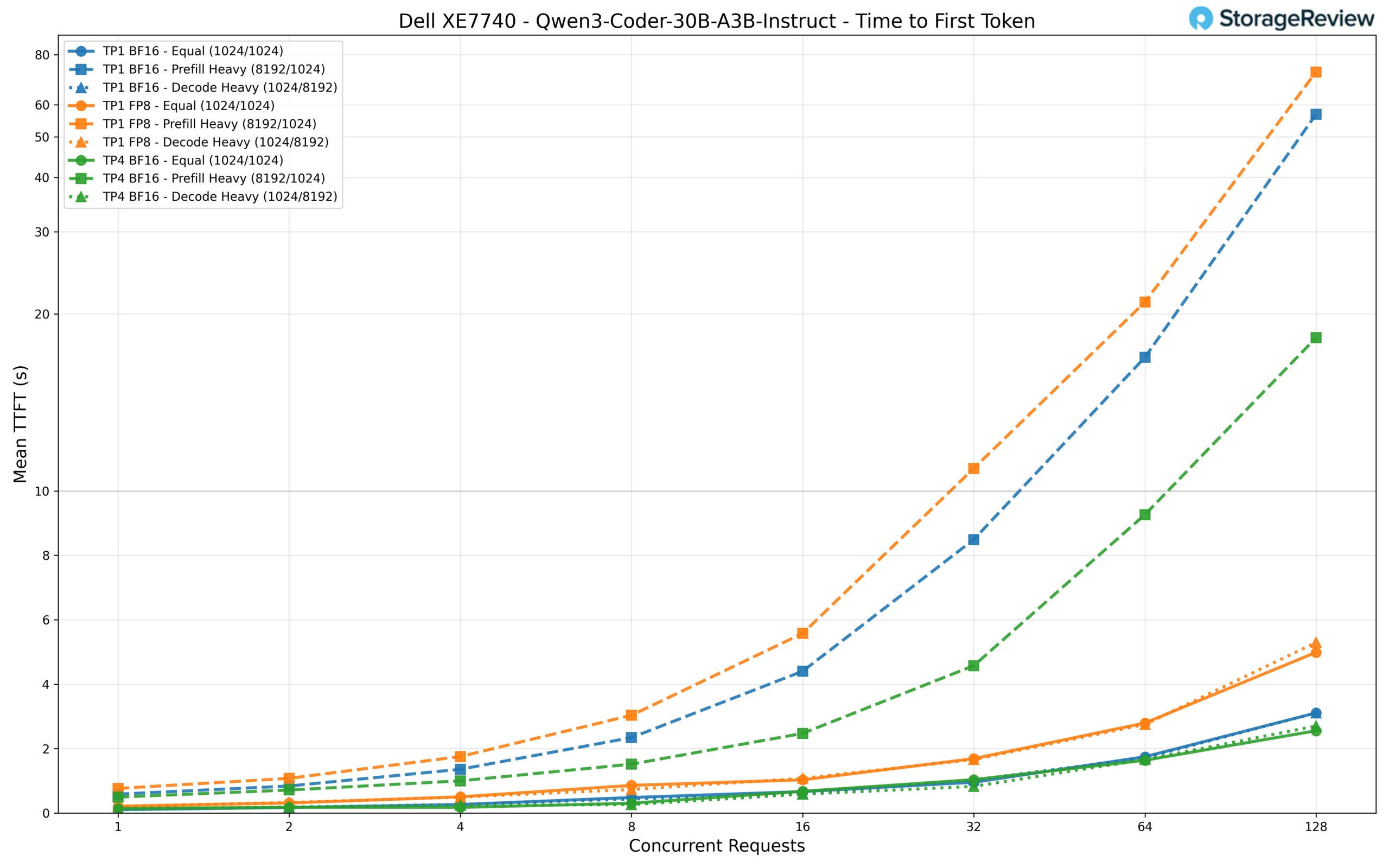

TTFT stays low because of the sparse activation sample. TP4 BF16 delivers first-token latency of round 140ms at single-user load and holds to roughly 2.6 seconds at 128 concurrent requests within the equal workload. TP1 BF16 is comparable at low concurrency (106ms) however climbs to three.1 seconds below full load. The prefill-heavy situation once more clearly differentiates the configurations: TP4 reaches about 18 seconds at 128 requests, TP1 BF16 hits 57 seconds, and TP1 FP8 extends to 72 seconds.

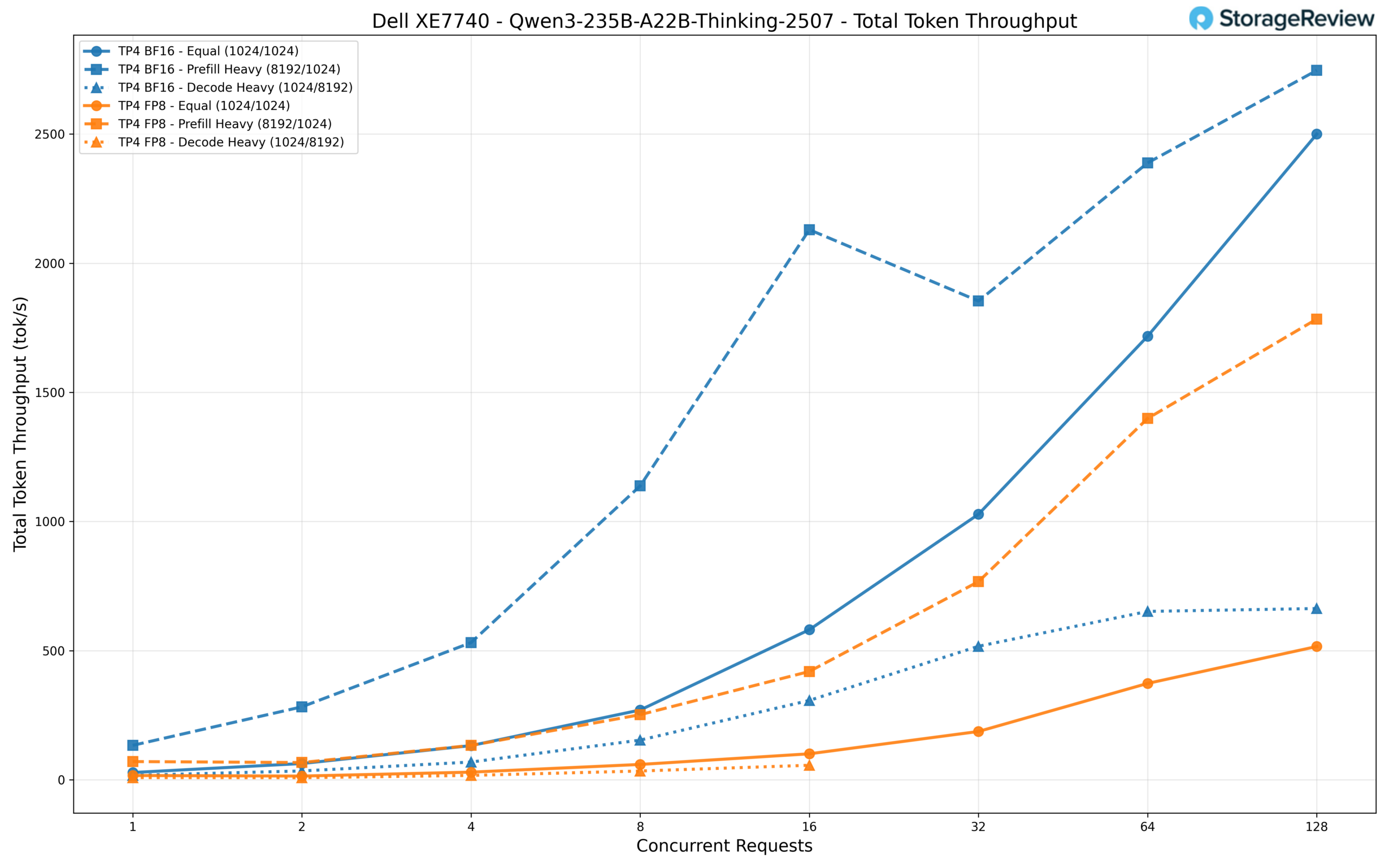

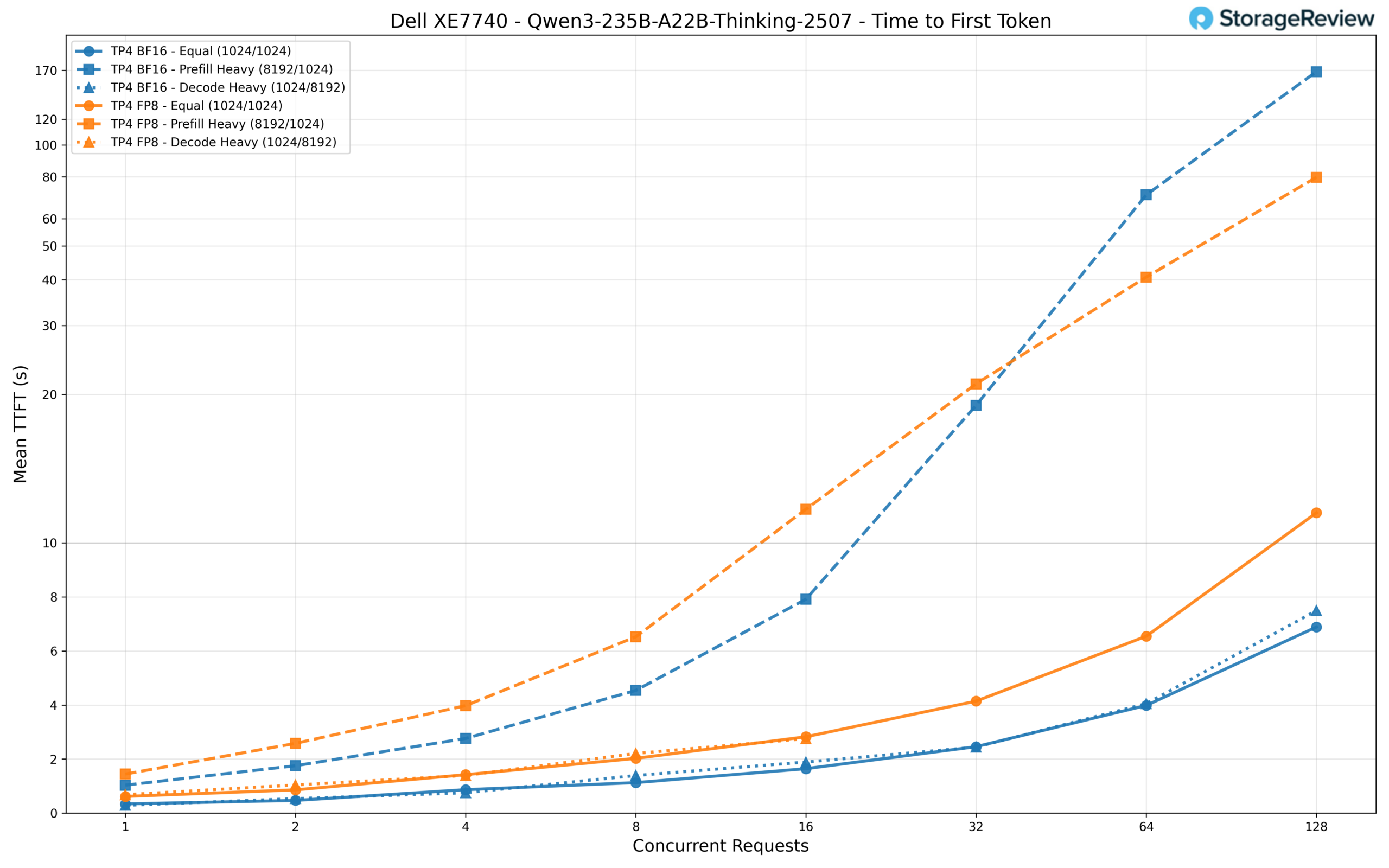

Qwen3 235B-A22B Considering

The biggest mannequin in our benchmark suite, Qwen3 235B-A22B Considering, is a large MoE reasoning mannequin with 235B whole parameters and 22B energetic parameters per token. Past its sheer scale, this mannequin contains built-in chain-of-thought reasoning capabilities, permitting it to interrupt down advanced issues step-by-step earlier than arriving at a solution at the price of extra decode tokens. This makes it notably well-suited for probably the most difficult duties: superior code technology and debugging, mathematical problem-solving, multi-step logical reasoning, and complicated, agentic workflows the place accuracy issues greater than uncooked pace. This mannequin requires TP4 to run, and we examined it in each BF16 and FP8 precision.

BF16 clearly outperforms FP8 throughout the board. At 128 concurrent requests, BF16 reaches roughly 2,750 tok/s within the prefill-heavy workload and a pair of,500 tok/s within the equal workload, whereas FP8 manages about 1,784 tok/s and 516 tok/s, respectively. The FP8 variant additionally skilled timeouts within the decode-heavy situation at larger concurrency ranges (32+ requests).

For TTFT, BF16 begins at round 340ms for a single equal-length request and scales to roughly 6.9 seconds at 128 concurrent requests. That is fairly responsive for a mannequin of this scale. FP8 is roughly 2x slower all through, starting at 615ms and reaching about 11.2 seconds at full load. The prefill-heavy workload is probably the most demanding situation, with BF16 climbing to 168 seconds and FP8 to 80 seconds at 128 requests.

Who is that this for?

Inference demand isn’t slowing down. Whether or not organizations are deploying fashions internally to speed up developer groups, embedding AI into customer-facing merchandise, or standing up automation pipelines that run across the clock, the compute necessities preserve rising. And with that demand comes a well-known bottleneck: procurement. Lead occasions on common accelerators can stretch for months, stalling initiatives which have already been funded and staffed.

The XE7740, configured with Intel Gaudi 3, addresses this constraint instantly. Gaudi 3 accelerators can be found now, and Intel offers validated deployment templates so groups can transfer from unboxing to serving inference in hours. Dell additional lowers the barrier with try-and-buy packages that place XE7740 techniques instantly in your atmosphere, letting you validate efficiency in opposition to your precise workloads, knowledge, and infrastructure earlier than committing to a full rollout. That mixture of speedy availability, quick time-to-value, and low-risk analysis makes the Gaudi 3 configuration notably engaging for organizations that want inference capability as we speak and can’t afford to attend on allocation queues.

That mentioned, the XE7740 isn’t a single-accelerator platform. With Dell’s dedication to silicon variety, the identical platform will be had with a wide range of choices and each common accelerator available on the market, and the suitable selection relies upon completely on the workload. Video processing pipelines, for instance, are a pure match for NVIDIA L4s, and the XE7740 will be geared up with 16 L4 GPUs alongside 8 PCIe Gen5 x16 NIC slots for a no-compromise streaming and transcoding system. Organizations operating combined AI workloads throughout departments can standardize on the XE7740 chassis and easily range the accelerator configuration to match every deployment, simplifying fleet administration whereas tailoring compute to the duty.

Conclusion

The Dell PowerEdge XE7740 is engineered for the realities of enterprise inference. Its dual-zone thermal design, structured PCIe topology, high-bandwidth reminiscence structure, and scale-out networking capability type a system constructed for sustained manufacturing workloads. These aren’t incidental design decisions; they mirror an infrastructure mannequin the place inference runs constantly, scales predictably, and integrates cleanly into present knowledge middle operations. On this analysis, Intel Gaudi 3 demonstrates that the XE7740 delivers inference as we speak throughout dense and MoE architectures, with predictable scaling conduct and powerful memory-bound throughput. Software program optimizations will proceed to enhance, however the platform’s architectural basis is already sound.

Extra importantly, the XE7740 establishes a sturdy blueprint for enterprise AI infrastructure. Organizations can standardize on a constant chassis, administration stack, and deployment mannequin whereas evolving accelerator technique over time. As fashions develop, workloads diversify, and inference turns into embedded extra deeply in enterprise operations, the necessity for steady, adaptable infrastructure will solely enhance. The PowerEdge XE7740 is positioned to fulfill that trajectory. It delivers the architectural steadiness, operational maturity, and growth headroom required for enterprise AI because it strikes from fast adoption to long-term integration.

This report is sponsored by Dell Applied sciences. All views and opinions expressed on this report are primarily based on our unbiased view of the product(s) into consideration.