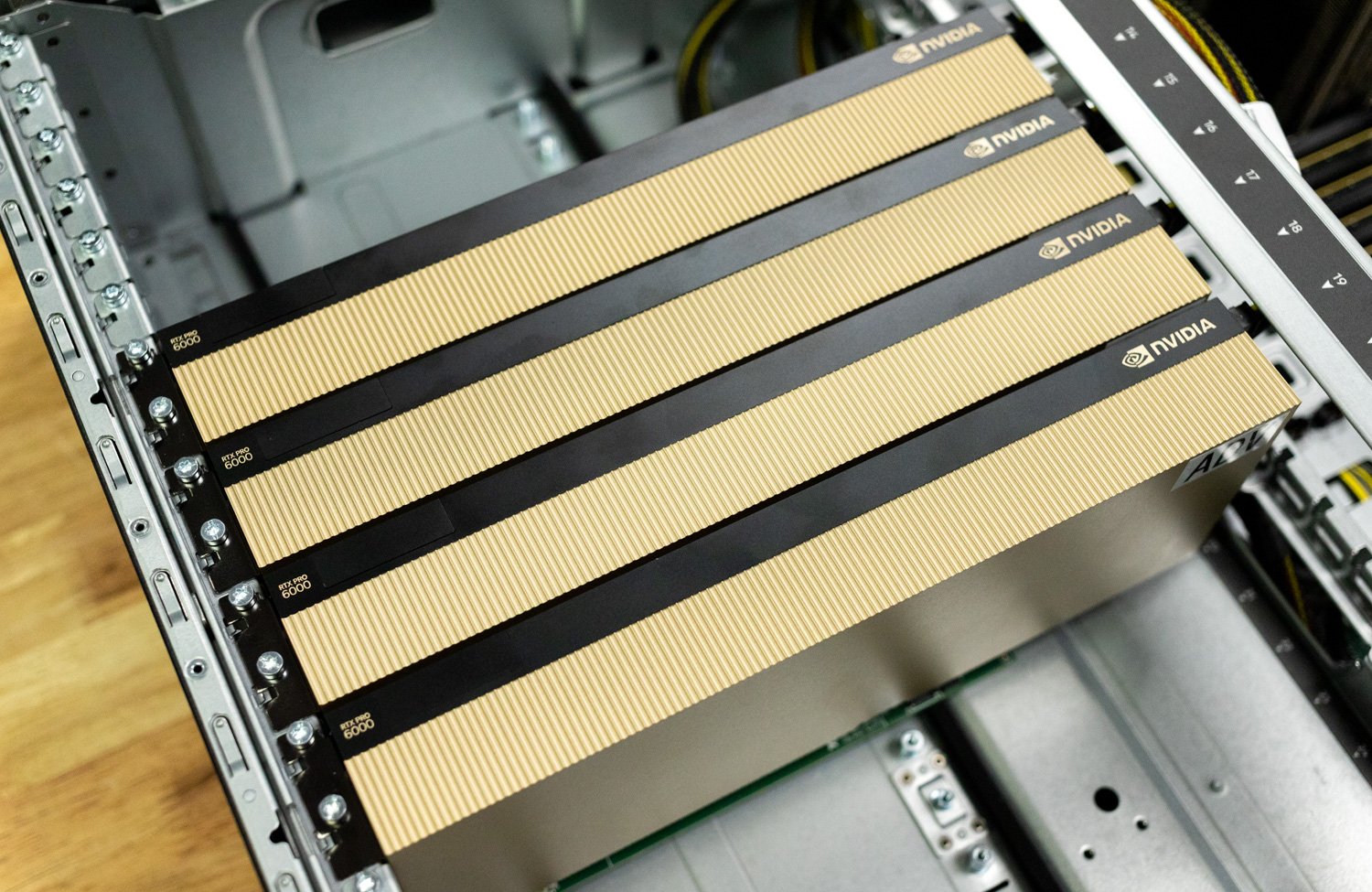

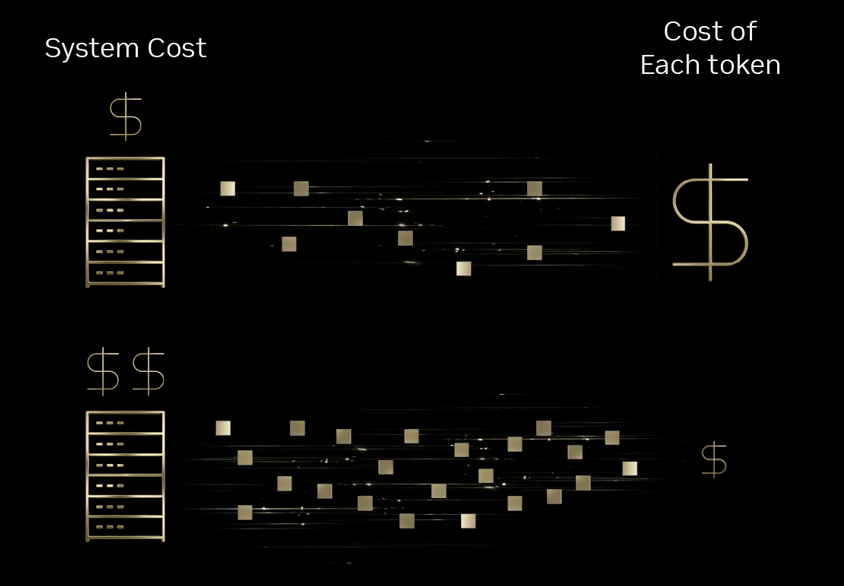

The elemental unit of intelligence in fashionable AI interactions is the token. Whether or not powering scientific diagnostics, interactive gaming dialogue, or autonomous customer support brokers, the scalability of those functions relies upon closely on tokenomics. Latest MIT Information point out that advances in infrastructure and algorithmic effectivity are decreasing inference prices by as much as 10x yearly. Main inference suppliers, together with Baseten, DeepInfra, Fireworks AI, and Collectively AI, at the moment are utilizing the NVIDIA Blackwell platform to attain these efficiencies, usually outperforming the earlier Hopper era by an order of magnitude.

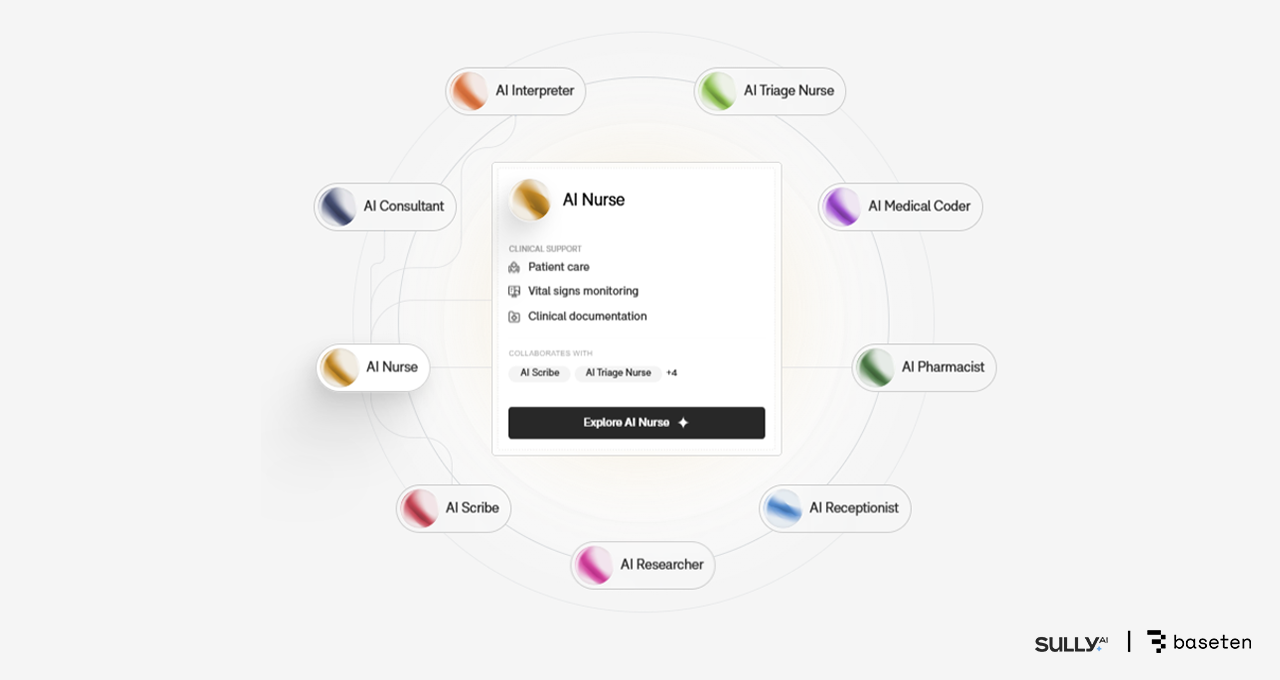

Healthcare Effectivity by way of Baseten and Sully.ai

Within the healthcare sector, administrative burdens corresponding to medical coding and documentation considerably detract from affected person care. Sully.ai addresses this by deploying AI brokers to automate these routine duties. Beforehand, the corporate confronted bottlenecks, together with unpredictable latency and inference prices that outpaced income progress when utilizing proprietary, closed-source fashions.

By migrating to Baseten’s Mannequin API, which makes use of open-source fashions on NVIDIA Blackwell GPUs, Sully.ai achieved a 90 p.c discount in inference prices. Baseten optimized the stack with the NVFP4 information format, TensorRT-LLM, and the NVIDIA Dynamo inference framework. The transition delivered 2.5x the throughput per greenback in contrast with Hopper and a 65% enchancment in response instances. So far, the implementation has reclaimed over 30 million minutes for physicians by automating handbook information entry.

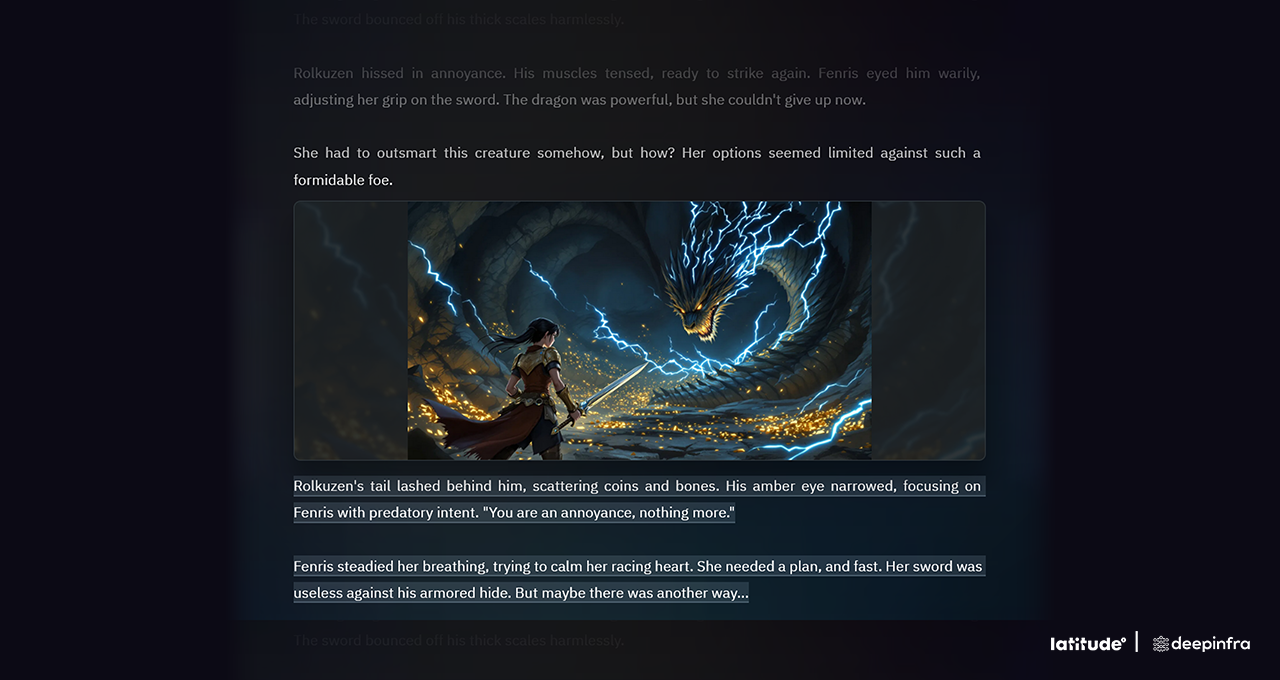

Gaming Efficiency with DeepInfra and Latitude

Latitude, the developer behind AI Dungeon and the Voyage RPG platform, faces distinctive scaling challenges as a result of each participant motion requires an inference request. Sustaining seamless gameplay requires low latency and cost-effective token supply. By operating large-scale Combination-of-Specialists (MoE) fashions on DeepInfra’s Blackwell-powered infrastructure, Latitude has achieved important value enhancements.

DeepInfra diminished the associated fee per million tokens from 20 cents on Hopper to 10 cents on Blackwell. By leveraging Blackwell’s native low-precision NVFP4 format, prices had been additional halved to five cents per million tokens. This 4x enchancment allows Latitude to deploy extra subtle fashions and deal with site visitors spikes with out compromising the consumer expertise or accuracy.

Scaling Agentic Workflows with Fireworks AI and Sentient

Sentient Labs develops open-source reasoning AI programs, corresponding to Sentient Chat, which orchestrates multi-agent workflows. These advanced interactions usually set off a cascade of autonomous duties, leading to important infrastructure overhead. Using Fireworks AI’s inference platform on NVIDIA Blackwell, Sentient achieved a 25 to 50 p.c enhance in value effectivity over Hopper-based deployments.

The elevated throughput per GPU enabled Sentient to deal with large concurrency. Throughout a viral launch part, the platform processed 1.8 million waitlisted customers inside 24 hours and managed 5.6 million queries in a single week. The Blackwell-optimized stack maintained persistently low latency regardless of the excessive question quantity.

Enterprise Voice Help by way of Collectively AI and Decagon

Decagon supplies AI brokers for enterprise buyer help, the place voice interactions require sub-second response instances to stay viable. Collectively AI hosts Decagon’s multimodel voice stack on NVIDIA Blackwell, implementing optimizations corresponding to speculative decoding and caching of repeated dialog components.

These technical refinements diminished response instances to beneath 400 milliseconds, even for queries involving 1000’s of tokens. By combining open-source and in-house fashions with Blackwell’s hardware-software co-design, Decagon diminished the associated fee per question by 6x in comparison with proprietary closed-source options.

The Way forward for Tokenomics

The transition to NVIDIA Blackwell, significantly the GB200 NVL72 system, marks a shift in how reasoning MoE fashions are deployed at scale. The platform’s skill to ship a 10x discount in value per token is a results of deep integration throughout compute, networking, and software program layers. Trying forward, the upcoming NVIDIA Rubin platform is predicted to proceed this trajectory, delivering an additional 10x enchancment in efficiency and token value effectivity in contrast with the Blackwell structure.