Google parent Alphabet is riding a wave of investor confidence as its stock hits record highs, fueled by Wall Street’s growing enthusiasm for the company’s custom AI chip strategy. The momentum comes as Google’s Tensor Processing Units (TPUs) gain traction beyond the company’s own walls, with reports suggesting Meta is considering a major shift to Google’s AI hardware.

This isn’t just another tech stock rally; it’s a fundamental reassessment of Google’s position in the AI infrastructure arms race. Alphabet shares have doubled in seven months, lifting the company’s market value to $3.8 trillion, as markets have grown more confident in the search giant’s ability to fend off the threat from ChatGPT owner OpenAI.

The catalyst for this latest surge appears to be Google’s aggressive push to commercialize its TPU technology. Meta is considering using Google’s TPUs in its own data centers in 2027 and may also rent TPUs from Google’s cloud unit next year, according to The Information. That would mark a significant departure from Meta’s current reliance on Nvidia hardware and represent a major validation of Google’s chip strategy.

Google’s latest TPU generation, Ironwood, is the company’s most powerful and energy-efficient custom silicon to date. According to Google Cloud, it delivers more than 4X better performance per chip for both training and inference workloads than Google’s previous generation, making it particularly well suited to the industry’s current shift from training frontier models to serving high-volume, low-latency AI inference.

The broader market reaction is telling. When reports of Meta’s potential TPU adoption surfaced, Nvidia shares fell as much as 7% before recovering to trade down 4%, while Alphabet shares climbed for a third consecutive day. The move suggests investors see Google’s TPU strategy as a credible alternative to Nvidia’s dominance in AI hardware.

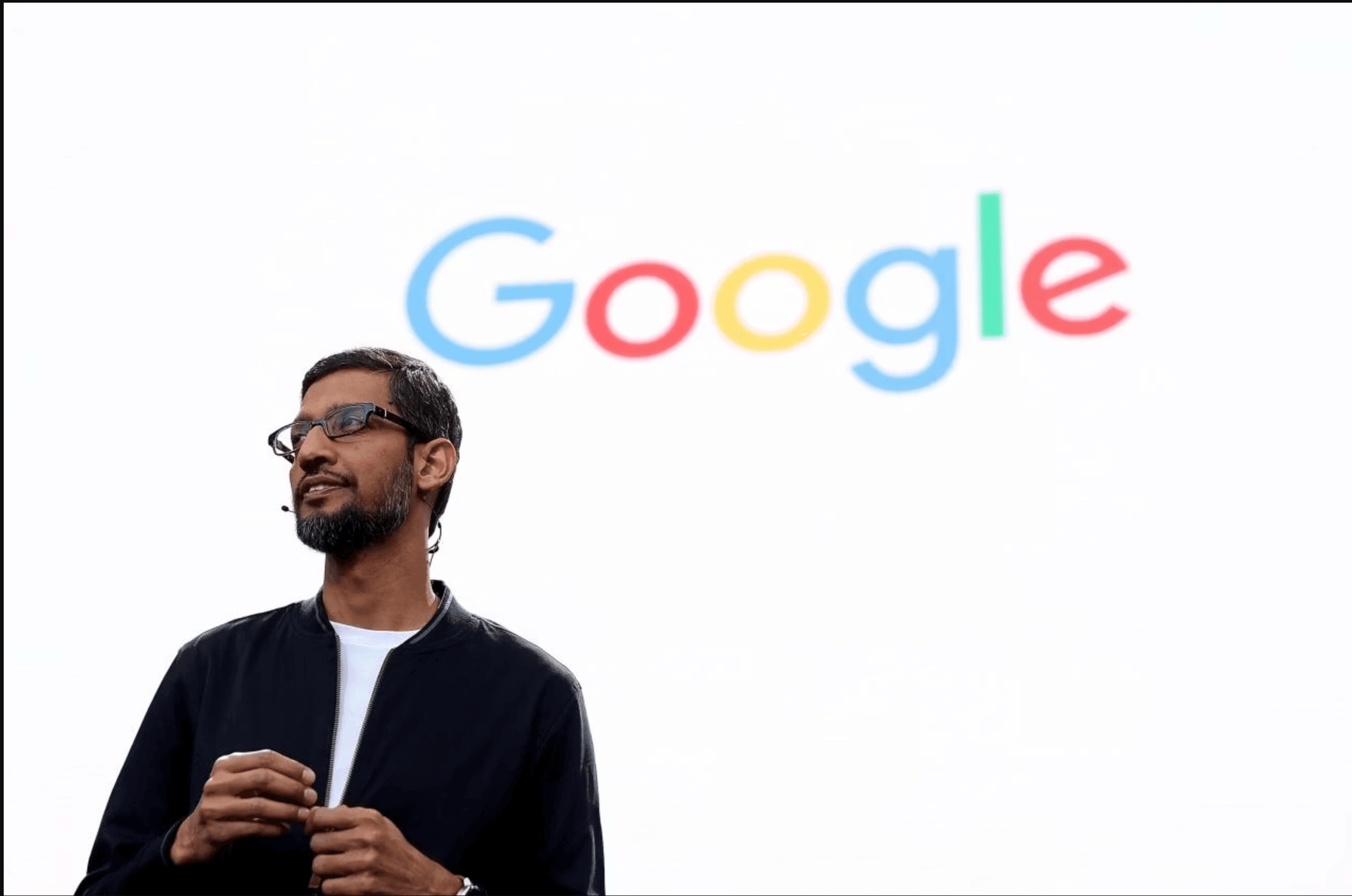

Google CEO Sundar Pichai has acknowledged “elements of irrationality” in the current AI investment boom while maintaining that the underlying technological transformation is profound.

“We can look back at the internet right now. There was clearly a lot of excess investment, but none of us would question whether the internet was profound,” Pichai told the BBC. “I expect AI to be the same. So I think it’s both rational and there are elements of irrationality through a moment like this.”

The TPU story represents Google’s long-term bet on domain-specific architecture. Unlike Nvidia’s GPUs, which were originally designed for graphics processing and later adapted for AI workloads, TPUs are application-specific integrated circuits (ASICs) designed from the ground up for neural networks. That specialization lets them excel at the massive matrix calculations neural networks depend on while minimizing the time data spends shuttling around the chip.

Google’s TPU commercialization push appears to be gaining momentum beyond Meta. Anthropic plans to expand its use of Google Cloud technologies, including access to up to one million TPUs, in a deal worth tens of billions of dollars. According to some Google Cloud executives, the Meta deal could generate revenue equal to as much as 10% of Nvidia’s current annual data center business.

This isn’t Google’s first attempt to challenge Nvidia’s dominance, but it may be the most credible. Google first revealed the TPU in 2016, after running it internally in its own data centers, and began offering TPUs to outside customers through Google Cloud in 2018. Since then, the company has released successive generations, each with significant performance improvements.

The timing of this TPU push aligns with broader industry trends. As AI models become more sophisticated and inference workloads grow exponentially, companies are looking for more efficient alternatives to traditional GPU architectures. Google’s TPUs, with specialized features such as the matrix multiply unit (MXU) and a proprietary interconnect topology, offer potential advantages for specific AI frameworks and for the high-throughput matrix operations central to large language models.
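Google’s own technical descriptions of the TPU describe the MXU as a systolic array: a grid of multiply-accumulate cells that pass operands directly to their neighbors instead of fetching them from memory for every operation, which is where the data-movement savings mentioned earlier come from. The toy simulation below is a minimal sketch of that idea, not Google’s hardware design. The function name `systolic_matmul` is hypothetical, and it uses the simple output-stationary dataflow (each cell keeps one running output sum), which is an assumption made for readability rather than a description of how any TPU generation schedules data. Real MXUs are far larger grids working on reduced-precision numbers.

```python
def systolic_matmul(A, B):
    """Toy cycle-by-cycle simulation of an output-stationary systolic array.

    Hypothetical illustration only. Cell (i, j) accumulates C[i][j]; values
    of A flow left-to-right along rows, values of B flow top-to-bottom along
    columns, and each cell only ever talks to its immediate neighbors.
    """
    n, k = len(A), len(A[0])
    if len(B) != k:
        raise ValueError("inner dimensions must match")
    m = len(B[0])

    acc = [[0] * m for _ in range(n)]    # one running sum per cell
    a_reg = [[0] * m for _ in range(n)]  # A value currently held by each cell
    b_reg = [[0] * m for _ in range(n)]  # B value currently held by each cell

    # Row i of A enters i cycles late and column j of B enters j cycles late,
    # so A[i][s] and B[s][j] meet in cell (i, j) at cycle s + i + j.
    for t in range(n + m + k - 2):
        new_a = [[0] * m for _ in range(n)]
        for i in range(n):
            s = t - i
            new_a[i][0] = A[i][s] if 0 <= s < k else 0  # feed left edge
            for j in range(1, m):
                new_a[i][j] = a_reg[i][j - 1]           # pass value right
        new_b = [[0] * m for _ in range(n)]
        for j in range(m):
            s = t - j
            new_b[0][j] = B[s][j] if 0 <= s < k else 0  # feed top edge
            for i in range(1, n):
                new_b[i][j] = b_reg[i - 1][j]           # pass value down
        a_reg, b_reg = new_a, new_b

        # Every cell does one multiply-accumulate per cycle, in parallel.
        for i in range(n):
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

Each cell touches only the values handed to it by its left and upper neighbors, so once the operands are loaded at the edges, the multiply proceeds without any cell going back to memory.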

Nvidia hasn’t taken this challenge lightly. The company took the unusual step of posting on X to defend its position: “We’re delighted by Google’s success – they’ve made great advances in AI, and we continue to supply to Google. NVIDIA is a generation ahead of the industry – it’s the only platform that runs every AI model and does it everywhere computing is done.”

For Google, the TPU strategy is more than a hardware play; it’s about building a complete AI ecosystem. The company’s full-stack approach covers not only the chips themselves but also the software infrastructure, with tools such as the XLA compiler and the JAX framework designed to work seamlessly with the TPU architecture.
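To make the JAX and XLA pairing concrete, here is a minimal sketch, assuming the open-source `jax` package is installed. `jax.jit` traces a Python function and hands the resulting computation to the XLA compiler, which generates code for whatever backend is available: CPU or GPU on an ordinary machine, TPU on a Cloud TPU host. The function name `dense_layer` and the array shapes are hypothetical choices for illustration, not taken from any Google workload.

```python
import jax
import jax.numpy as jnp


def dense_layer(x, w, b):
    # One neural-network layer: a matrix multiply (the kind of operation an
    # MXU accelerates) followed by a bias add and a ReLU activation.
    return jax.nn.relu(x @ w + b)


# jax.jit traces dense_layer once per input shape/dtype and compiles it with
# XLA for the default backend (CPU, GPU or TPU, whichever is present).
fast_layer = jax.jit(dense_layer)

x = jnp.ones((4, 8))
w = jnp.full((8, 16), 0.5)
b = jnp.zeros((16,))

y = fast_layer(x, w, b)
print(y.shape)                    # (4, 16)
print(jax.devices()[0].platform)  # backend XLA compiled for on this machine

# Inspect the intermediate program JAX hands to XLA; the matrix multiply
# shows up as a dot_general operation.
lowered_text = jax.jit(dense_layer).lower(x, w, b).as_text()
print(lowered_text[:400])
```

Nothing in `dense_layer` refers to a specific chip; targeting the hardware is left to the compiler, which illustrates why the software stack matters as much as the silicon in the strategy described above.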

As the AI infrastructure battle intensifies, Google’s stock performance suggests investors are betting that the company’s decade-long investment in custom silicon is finally paying off. Whether TPUs can truly challenge Nvidia’s ecosystem dominance remains to be seen, but for now, Wall Street appears convinced that Google has found its footing in the AI hardware race.